Welcome back to this series about the new HTTP/3 protocol. In part 1, we looked at why exactly we need HTTP/3 and the underlying QUIC protocol, and what their main new features are.

In this second part, we’ll zoom in on the performance improvements that QUIC and HTTP/3 bring to the table for web-page loading. We’ll, however, also be somewhat skeptical of the impact we can expect from these new features in practice.

As we’ll see, QUIC and HTTP/3 indeed have great web performance potential, but mainly for users on slow networks. If your average visitor is on a fast cabled or cellular network, they probably won’t benefit from the new protocols all that much. However, note that even in countries and regions with typically fast uplinks, the slowest 1% to even 10% of your audience (the so-called 99th or 90th percentiles) still stand to potentially gain a lot. This is because HTTP/3 and QUIC mainly help deal with the somewhat uncommon yet potentially high-impact problems that can arise on today’s Internet.

This part is a bit more technical than the first, although it offloads most of the really deep stuff to outside sources, focusing on explaining why these things matter to the average web developer.

This series is divided into three parts:

HTTP/3 history and core concepts

This is targeted at people new to HTTP/3 and protocols in general, and it mainly discusses the basics.

HTTP/3 performance features (current article)

This is more in depth and technical. People who already know the basics can start here.

Practical HTTP/3 deployment options (coming up soon!)

This explains the challenges involved in deploying and testing HTTP/3 yourself. It details how and if you should change your web pages and resources as well.

A Primer on Speed

Discussing performance and “speed” can quickly get complex, because many underlying aspects contribute to a web page loading “slowly”. Because we’re dealing with network protocols here, we’ll mainly look at network aspects, of which two are most important: latency and bandwidth.

Latency can be roughly defined as the time it takes to send a packet from point A (say, the client) to point B (the server). It’s physically limited by the speed of light or, practically, by how fast signals can travel in wires or in the open air. This means that latency often depends on the physical, real-world distance between A and B.

On earth, this means that typical latencies are conceptually small, between roughly 10 and 200 milliseconds. However, this is only one way: Responses to the packets also need to come back. Two-way latency is often called round-trip time (RTT).

Due to features such as congestion control (see below), we’ll often need quite a few round trips to load even a single file. As such, even low latencies of less than 50 milliseconds can add up to considerable delays. This is one of the main reasons why content delivery networks (CDNs) exist: They place servers physically closer to the end user in order to reduce latency, and thus delay, as much as possible.
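To put rough numbers on this, the sketch below estimates a lower bound on the RTT from physical distance alone and multiplies it by a number of round trips. It assumes signals travel through optical fiber at about 200,000 km/s (roughly two-thirds of the speed of light in a vacuum) and ignores routing detours, queuing, and processing, so real-world RTTs will be higher. The distances and the five round trips are illustrative assumptions, not measurements.

```python
# Assumed propagation speed of light in optical fiber (~2/3 of c), in km per second.
FIBER_KM_PER_S = 200_000


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip time: the signal must travel there and back."""
    one_way_s = distance_km / FIBER_KM_PER_S
    return 2 * one_way_s * 1000


def total_delay_ms(distance_km: float, round_trips: int) -> float:
    """Time spent purely on propagation over a number of round trips."""
    return round_trips * min_rtt_ms(distance_km)


if __name__ == "__main__":
    # Illustrative comparison: a distant origin server vs. a nearby CDN edge,
    # assuming 5 round trips are needed (handshakes plus slow start).
    for label, km in [("distant origin", 6_000), ("nearby CDN edge", 300)]:
        print(f"{label}: RTT >= {min_rtt_ms(km):.0f} ms, "
              f"5 round trips >= {total_delay_ms(km, 5):.0f} ms")
```

Even under these ideal conditions, five round trips to a server 6,000 km away cost at least 300 ms, against about 15 ms for an edge server 300 km away, which is the gap a CDN tries to close.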

Bandwidth, then, can roughly be said to be the number of packets that can be sent at the same time. This is a bit more difficult to explain, because it depends on the physical properties of the medium (for example, the used frequency of radio waves), the number of users on the network, and also the devices that interconnect different subnetworks (because they typically can only process a certain number of packets per second).

An often-used metaphor is that of a pipe used to transport water. The length of the pipe is the latency, while the width of the pipe is the bandwidth. On the Internet, however, we typically have a long series of connected pipes, some of which can be wider than others (leading to so-called bottlenecks at the narrowest links). As such, the end-to-end bandwidth between points A and B is often limited by the slowest subsections.
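Both ideas from the metaphor can be written down directly: the end-to-end bandwidth is the minimum over the links on the path, and multiplying that bandwidth by the RTT gives the “volume” of the pipe, usually called the bandwidth-delay product, which is how much data can be in flight at once. This is a minimal sketch; the link speeds and RTT in the example are made-up values.

```python
def end_to_end_bandwidth_mbps(link_mbps: list[float]) -> float:
    """A path is only as wide as its narrowest link (the bottleneck)."""
    if not link_mbps:
        raise ValueError("a path needs at least one link")
    return min(link_mbps)


def bandwidth_delay_product_bytes(bandwidth_mbps: float, rtt_ms: float) -> float:
    """Bytes that fit 'in the pipe' (sent but not yet acknowledged) at once."""
    bits_per_second = bandwidth_mbps * 1_000_000
    rtt_seconds = rtt_ms / 1000
    return bits_per_second * rtt_seconds / 8


if __name__ == "__main__":
    path = [1000.0, 50.0, 300.0]  # hypothetical three-link path, in Mbit/s
    bottleneck = end_to_end_bandwidth_mbps(path)
    print(bottleneck)                                      # 50.0
    print(bandwidth_delay_product_bytes(bottleneck, 40))   # 250000.0
```

On this hypothetical path, a sender needs about 250 KB in flight at a 40 ms RTT to keep the 50 Mbit/s bottleneck busy; the faster links elsewhere on the path do not help.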

While a perfect understanding of these concepts isn’t needed for the rest of this post, having a common high-level definition would be good. For more info, I recommend checking out Ilya Grigorik’s excellent chapter on latency and bandwidth in his book High Performance Browser Networking.

Congestion Control

One aspect of performance is about how efficiently a transport protocol can use a network’s full (physical) bandwidth (i.e. roughly, how many packets per second can be sent or received). This in turn affects how fast a page’s resources can be downloaded. Some claim that QUIC somehow does this much better than TCP, but that’s not true.

Did You Know?

A TCP connection, for example, doesn’t just start sending data at full bandwidth, because this could end up overloading (or congesting) the network. This is because, as we said, each network link can (physically) process only a certain amount of data every second. Give it any more and there’s no option other than to drop the excess packets, leading to packet loss.

As discussed in part 1, for a reliable protocol like TCP, the only way to recover from packet loss is by retransmitting a new copy of the data, which takes one round trip. Especially on high-latency networks (say, with an RTT of over 50 milliseconds), packet loss can severely affect performance.

Another problem is that we don’t know up front what the maximum bandwidth will be. It often depends on a bottleneck somewhere in the end-to-end connection, but we cannot predict or know where this will be. The Internet also doesn’t have mechanisms (yet) to signal link capacities back to the endpoints.

Additionally, even if we knew the available physical bandwidth, that wouldn’t mean we could use all of it ourselves. Several users are typically active on a network concurrently, each of whom needs a fair share of the available bandwidth.

As such, a connection doesn’t know up front how much bandwidth it can safely or fairly use, and this bandwidth can change as users join, leave, and use the network. To solve this problem, TCP will constantly try to discover the available bandwidth over time by using a mechanism called congestion control.

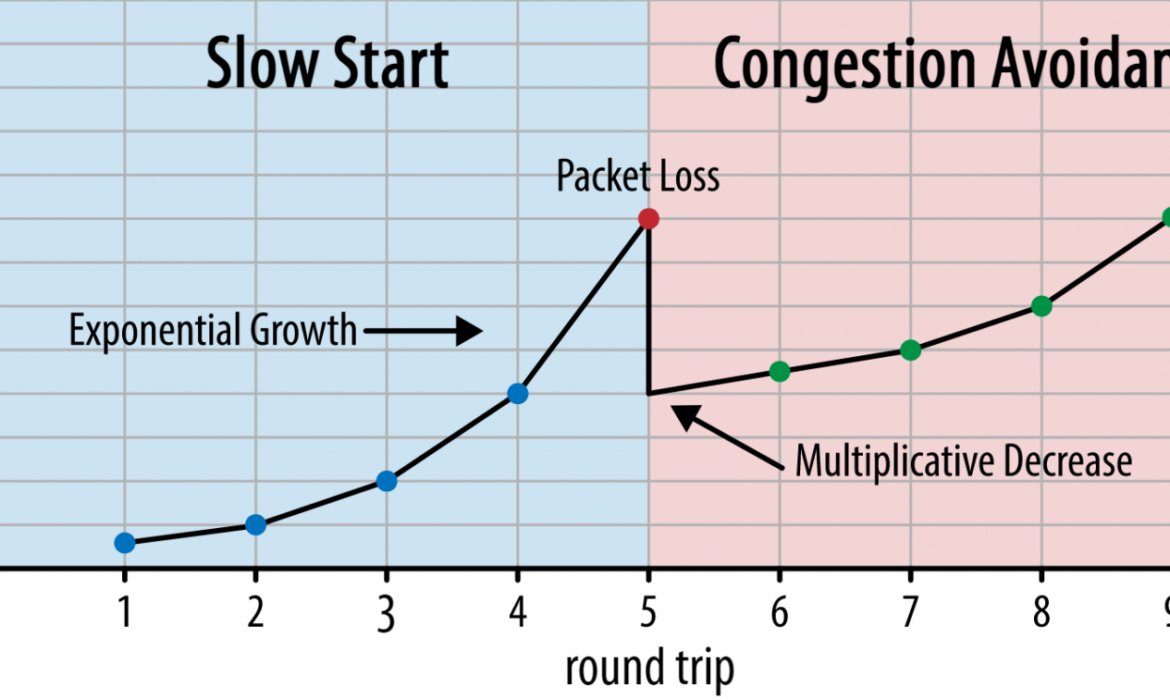

At the start of the connection, it sends just a few packets (in practice, ranging between 10 and 100 packets, or about 14 and 140 KB of data) and waits one round trip until the receiver sends back acknowledgements of these packets. If they are all acknowledged, this means the network can handle that send rate, and we can try to repeat the process but with more data (in practice, the send rate usually doubles with every iteration).

This way, the send rate continues to grow until some packets are not acknowledged (which indicates packet loss and network congestion). This first phase is typically called a “slow start”. Upon detection of packet loss, TCP reduces the send rate, and (after a while) starts to increase the send rate again, albeit in (much) smaller increments. This reduce-then-grow logic is repeated for every packet loss afterwards. Eventually, this means that TCP will constantly try to reach its ideal, fair bandwidth share. This mechanism is illustrated in figure 1.
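The following toy model reproduces the behavior just described at the granularity of one round trip: the window doubles during slow start, is halved on loss, and then grows by a single packet per round trip. It is loosely modeled on classic NewReno-style behavior but is a simplification under stated assumptions: windows are counted in whole packets, and a loss is assumed to occur exactly when the window exceeds a fixed path capacity, which real networks never do so neatly.

```python
def simulate_cwnd(capacity: int, rtts: int, initial_window: int = 10) -> list[int]:
    """Toy per-round-trip model of slow start followed by additive increase /
    multiplicative decrease. Windows are counted in packets.

    Assumption: a loss happens exactly when the window exceeds a fixed path
    capacity; real networks are far noisier than this.
    """
    cwnd = initial_window
    in_slow_start = True
    history = []
    for _ in range(rtts):
        history.append(cwnd)
        if cwnd > capacity:
            # Loss detected: halve the send rate and leave slow start for good.
            cwnd = max(cwnd // 2, 1)
            in_slow_start = False
        elif in_slow_start:
            # Slow start: every fully acknowledged round trip doubles the window.
            cwnd *= 2
        else:
            # Congestion avoidance: grow by just one packet per round trip.
            cwnd += 1
    return history


if __name__ == "__main__":
    print(simulate_cwnd(capacity=100, rtts=10))
    # [10, 20, 40, 80, 160, 80, 81, 82, 83, 84]
```

Note that the sender only learns where the limit lies by overshooting it (the 160-packet round), after which it probes upward much more cautiously.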

This is an extremely oversimplified explanation of congestion control. In practice, many other factors are at play, such as bufferbloat, the fluctuation of RTTs due to congestion, and the fact that multiple concurrent senders need to get their fair share of the bandwidth. As such, many different congestion-control algorithms exist, and many are still being invented today, with none performing optimally in all situations.

While TCP’s congestion control makes it robust, it also means it takes a while to reach optimal send rates, depending on the RTT and the actual available bandwidth. For web-page loading, this slow-start approach can also affect metrics such as the first contentful paint, because only a small amount of data (tens to a few hundred KB) can be transferred in the first few round trips. (You might have heard the recommendation to keep your critical data smaller than 14 KB.)
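The 14 KB advice follows from the same arithmetic. The sketch below counts how many round trips slow start needs to deliver a given amount of data, assuming an initial window of about 14 KB (the 10-packet case mentioned above), a window that doubles every round trip, and no packet loss. Handshake round trips are not included.

```python
def round_trips_needed(total_bytes: int, initial_window_bytes: int = 14_000) -> int:
    """Round trips slow start needs to deliver total_bytes, assuming no loss."""
    sent = 0
    window = initial_window_bytes
    rounds = 0
    while sent < total_bytes:
        sent += window   # one full window is delivered per round trip
        window *= 2      # each fully acknowledged window doubles the next one
        rounds += 1
    return rounds


if __name__ == "__main__":
    for size in (14_000, 50_000, 100_000, 500_000):
        print(f"{size // 1000} KB -> {round_trips_needed(size)} round trip(s)")
```

Under these assumptions, anything that fits in roughly 14 KB can arrive in the first round trip, while a 100 KB page already needs four.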

Choosing a more aggressive approach could thus lead to better results on high-bandwidth and high-latency networks, especially if you don’t care about the occasional packet loss. This is where I’ve again seen many misinterpretations of how QUIC works.

As discussed in part 1, QUIC, in theory, suffers less from packet loss (and the related head-of-line (HOL) blocking) because it treats packet loss on each resource’s byte stream independently. Additionally, QUIC runs over the User Datagram Protocol (UDP), which, unlike TCP, doesn’t have a congestion-control feature built in; it allows you to try sending at whatever rate you want and doesn’t retransmit lost data.

This has led to many articles claiming that QUIC also doesn’t use congestion control, that QUIC can instead start sending data at a much higher rate over UDP (relying on the removal of HOL blocking to deal with packet loss), and that this is why QUIC is much faster than TCP.

In reality, nothing could be further from the truth: QUIC actually uses bandwidth-management techniques very similar to TCP’s. It too starts with a lower send rate and grows it over time, using acknowledgements as a key mechanism to measure network capacity. This is (among other reasons) because QUIC needs to be reliable in order to be useful for something such as HTTP, because it needs to be fair to other QUIC (and TCP!) connections, and because its HOL-blocking removal doesn’t actually help against packet loss all that well (as we’ll see below).

However, that doesn’t mean that QUIC can’t be (a bit) smarter than TCP about how it manages bandwidth. This is mainly because QUIC is more flexible and easier to evolve than TCP. As we’ve said, congestion-control algorithms are still heavily evolving today, and we’ll likely need to, for example, tweak things to get the most out of 5G.

However, TCP is typically implemented in the operating system’s (OS) kernel, a secure and more restricted environment, which for most OSes isn’t even open source. As such, tuning congestion logic is usually done by only a select few developers, and evolution is slow.

In contrast, most QUIC implementations are currently being done in “user space” (where we typically run native apps) and are made open source, explicitly to encourage experimentation by a much wider pool of developers (as already shown, for example, by Facebook).

Another concrete example is the delayed acknowledgement frequency extension proposal for QUIC. While, by default, QUIC sends an acknowledgement for every 2 received packets, this extension allows endpoints to acknowledge, for example, every 10 packets instead. This has been shown to give large speed benefits on satellite and very high-bandwidth networks, because the overhead of transmitting the acknowledgement packets is lowered. Adding such an extension to TCP would take a long time to become adopted, while for QUIC it’s much easier to deploy.
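A quick calculation shows why the acknowledgement frequency matters. The helper below counts how many ACKs a receiver sends for a given number of packets. It is a simplification that ignores timer-based and reordering-triggered acknowledgements, and the parameter name `ack_every` is made up for this example rather than taken from the extension’s specification.

```python
def ack_count(packets_received: int, ack_every: int) -> int:
    """Number of ACKs sent if one ACK covers every `ack_every` packets."""
    if ack_every < 1:
        raise ValueError("ack_every must be at least 1")
    # Ceiling division: a final partial batch still needs its own ACK.
    return -(-packets_received // ack_every)


if __name__ == "__main__":
    print(ack_count(1_000, 2))   # 500 ACKs with the default behavior
    print(ack_count(1_000, 10))  # 100 ACKs when acknowledging every 10 packets
```

For 1,000 received packets, that is a fivefold reduction in acknowledgement traffic flowing back to the sender.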

As such, we can expect that QUIC’s flexibility will lead to more experimentation and better congestion-control algorithms over time, which could in turn also be backported to TCP to improve it as well.

Did You Know?

The official QUIC Recovery RFC 9002 specifies the use of the NewReno congestion-control algorithm. While this approach is robust, it’s also somewhat outdated and not used extensively in practice anymore. So, why is it in the QUIC RFC? The first reason is that when QUIC was started, NewReno was the most recent congestion-control algorithm that was itself standardized. More advanced algorithms, such as BBR and CUBIC, either are still not standardized or only recently became RFCs.

The second reason is that NewReno is a relatively simple set-up. Because the algorithms need a few tweaks to deal with QUIC’s differences from TCP, it’s easier to explain those changes on a simpler algorithm. As such, RFC 9002 should be read more as “how to adapt a congestion-control algorithm to QUIC”, rather than “this is the thing you should use for QUIC”. Indeed, most production-level QUIC implementations have made custom implementations of both CUBIC and BBR.

It bears repeating that congestion-control algorithms are not TCP- or QUIC-specific; they can be used by either protocol, and the hope is that advances in QUIC will eventually find their way to TCP stacks as well.

Did You Know?

Note that, next to congestion control, there is a related concept called flow control. These two features are often confused in TCP, because they are both said to use the “TCP window”, although there are actually two windows: the congestion window and the TCP receive window. Flow control, however, comes into play a lot less for the web-page loading use case we’re interested in, so we’ll skip it here. More in-depth information is available.

What Does It All Mean?

QUIC is still bound by the laws of physics and the need to be nice to other senders on the Internet. This means that it will not magically download your website’s resources much more quickly than TCP. However, QUIC’s flexibility means that experimenting with new congestion-control algorithms will become easier, which should improve things in the future for both TCP and QUIC.

0-RTT Connection Set-Up

A second performance aspect is about how many round trips it takes before you can send useful HTTP data (say, page resources) on a new connection. Some claim that QUIC is two to even three round trips faster than TCP + TLS, but we’ll see that it’s really only one.

Did You Know?

As we’ve discussed in part 1, a connection typically performs one (TCP) or two (TCP + TLS) handshakes before HTTP requests and responses can be exchanged. These handshakes exchange initial parameters that both client and server need to know in order to, for example, encrypt the data.

As you can see in figure 2 below, each individual handshake takes at least one round trip to complete (TCP + TLS 1.3, (b)) and sometimes two (TLS 1.2 and prior, (a)). This is inefficient, because we need at least two round trips of handshake waiting time (overhead) before we can send our first HTTP request, which means waiting at least three round trips for the first HTTP response data (the returning red arrow) to come in. On slow networks, this can mean an overhead of 100 to 200 milliseconds.

You might be wondering why the TCP and TLS handshakes cannot simply be combined and done in the same round trip. While this is conceptually possible (QUIC does exactly that), things were initially not designed like this, because we need to be able to use TCP with and without TLS on top. Put differently, TCP simply does not support sending non-TCP stuff during the handshake. There have been efforts to add this with the TCP Fast Open extension; however, as discussed in part 1, this has turned out to be difficult to deploy at scale.

Luckily, QUIC was designed with TLS in mind from the start, and as such does combine both the transport and cryptographic handshakes in a single mechanism. This means that the QUIC handshake will take only one round trip in total to complete, which is one round trip less than TCP + TLS 1.3 (see figure 2c above).

You might be confused, because you’ve probably read that QUIC is two or even three round trips faster than TCP, not just one. This is because most articles only consider the worst case (TCP + TLS 1.2, (a)), not mentioning that the modern TCP + TLS 1.3 also “only” takes two round trips (b). While a speed boost of one round trip is nice, it’s hardly amazing. Especially on fast networks (say, with an RTT of less than 50 milliseconds), this will be barely noticeable, although slow networks and connections to distant servers would profit a bit more.

Next, you might be wondering why we need to wait for the handshake(s) at all. Why can’t we send an HTTP request in the first round trip? This is mainly because, if we did, then that first request would be sent unencrypted, readable by any eavesdropper on the wire, which is obviously not great for privacy and security. As such, we need to wait for the cryptographic handshake to complete before sending the first HTTP request. Or do we?

This is where a clever trick is used in practice. We know that users often revisit web pages within a short time of their first visit. As such, we can use the initial encrypted connection to bootstrap a second connection in the future. Simply put, at some point during its lifetime, the first connection is used to securely communicate new cryptographic parameters between the client and server. These parameters can then be used to encrypt the second connection from the very start, without having to wait for the full TLS handshake to complete. This approach is called “session resumption”.

It allows for a powerful optimization: We can now safely send our first HTTP request along with the QUIC/TLS handshake, saving another round trip! As for TLS 1.3, this effectively removes the TLS handshake’s waiting time. This method is often called 0-RTT (although, of course, it still takes one round trip for the HTTP response data to start arriving).

Both session resumption and 0-RTT are, again, things that I’ve often seen wrongly explained as being QUIC-specific features. In reality, these are actually TLS features that were already present in some form in TLS 1.2 and are now fully fledged in TLS 1.3.

Put differently, as you can see in figure 3 below, we can get the performance benefits of these features over TCP (and thus also HTTP/2 and even HTTP/1.1) as well! We see that even with 0-RTT, QUIC is still only one round trip faster than an optimally functioning TCP + TLS 1.3 stack. The claim that QUIC is three round trips faster comes from comparing figure 2’s (a) with figure 3’s (f), which, as we’ve seen, isn’t really fair.
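The round-trip counts behind figures 2 and 3 can be tallied in a few lines. The sketch below assumes full handshakes as described in the text (TCP: one round trip, TLS 1.2: two, TLS 1.3: one, QUIC’s combined handshake: one, 0-RTT: none for the cryptographic part) and adds one round trip for the HTTP request and response themselves. It deliberately ignores TCP Fast Open and other optimizations.

```python
# Handshake round trips that must complete before the first HTTP request is sent.
HANDSHAKE_RTTS = {
    "TCP + TLS 1.2": 1 + 2,         # figure 2 (a)
    "TCP + TLS 1.3": 1 + 1,         # figure 2 (b)
    "QUIC": 1,                      # figure 2 (c): transport + crypto combined
    "TCP + TLS 1.3 0-RTT": 1 + 0,   # the TCP handshake is still needed
    "QUIC 0-RTT": 0,                # figure 3 (f): request sent with the handshake
}


def rtts_to_first_response_byte(setup: str) -> int:
    # One extra round trip for the request to go out and the response to return.
    return HANDSHAKE_RTTS[setup] + 1


def rtts_saved(slower: str, faster: str) -> int:
    return rtts_to_first_response_byte(slower) - rtts_to_first_response_byte(faster)


if __name__ == "__main__":
    print(rtts_saved("TCP + TLS 1.2", "QUIC 0-RTT"))        # 3: the headline claim
    print(rtts_saved("TCP + TLS 1.3", "QUIC"))              # 1
    print(rtts_saved("TCP + TLS 1.3 0-RTT", "QUIC 0-RTT"))  # 1: the fair comparison
```

At a 50 ms RTT, the fair comparison is worth about 50 ms; only the mismatched (a) versus (f) comparison produces the 150 ms headline figure.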

The worst part is that when using 0-RTT, QUIC can’t even really use that gained round trip all that well, due to security. To understand this, we need to understand one of the reasons why the TCP handshake exists. First, it allows the client to be sure that the server is actually available at the given IP address before sending it any higher-layer data.

Secondly, and crucially here, it allows the server to make sure that the client opening the connection is actually who and where they say they are before sending it data. If you recall how we defined a connection with the 4-tuple in part 1, you’ll know that the client is mainly identified by its IP address. And this is the problem: IP addresses can be spoofed!

Suppose that an attacker requests a very large file via HTTP over QUIC 0-RTT. However, they spoof their IP address, making it look like the 0-RTT request came from their victim’s computer. This is shown in figure 4 below. The QUIC server has no way of detecting whether the IP was spoofed, because these are the very first packets it’s seeing from that client.

If the server then simply starts sending the large file back to the spoofed IP, it could end up overloading the victim’s network bandwidth (especially if the attacker were to make many of these fake requests in parallel). Note that the QUIC response would be dropped by the victim, because it doesn’t expect incoming data, but that doesn’t matter: Their network still needs to process the packets!

This is called a reflection, or amplification, attack, and it’s a major way in which hackers execute distributed denial-of-service (DDoS) attacks. Note that this doesn’t happen when 0-RTT over TCP + TLS is being used, precisely because the TCP handshake needs to complete before the 0-RTT request is even sent along with the TLS handshake.

As such, QUIC has to be conservative in replying to 0-RTT requests, limiting how much data it sends in response until the client has been verified to be a real client and not a victim. For QUIC, this data amount has been set to three times the amount received from the client.

Put differently, QUIC has a maximum “amplification factor” of three, which was determined to be an acceptable trade-off between performance usefulness and security risk (especially compared to some incidents that had an amplification factor of over 51,000 times). Because the client typically first sends just one to two packets, the QUIC server’s 0-RTT reply will be capped at just 4 to 6 KB (including other QUIC and TLS overhead!), which is somewhat less than impressive.
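The three-times rule boils down to simple bookkeeping. The class below is a minimal sketch of how a server could track it per client address; the class and method names are hypothetical and not taken from any particular QUIC implementation. Once the address is validated, the sketch assumes the only remaining limit is congestion control, which is not modeled here.

```python
from typing import Optional


class AmplificationLimiter:
    """Caps what a server sends to an unvalidated address at factor x bytes received."""

    def __init__(self, factor: int = 3) -> None:
        self.factor = factor
        self.bytes_received = 0
        self.bytes_sent = 0
        self.address_validated = False

    def on_datagram_received(self, size: int) -> None:
        # Every byte the client sends raises the server's budget by `factor` bytes.
        self.bytes_received += size

    def on_datagram_sent(self, size: int) -> None:
        self.bytes_sent += size

    def on_address_validated(self) -> None:
        # E.g. the client acknowledged our packets, proving it really is at this IP.
        self.address_validated = True

    def sendable_bytes(self) -> Optional[int]:
        """Remaining budget in bytes, or None once the limit no longer applies."""
        if self.address_validated:
            return None
        return max(self.factor * self.bytes_received - self.bytes_sent, 0)


if __name__ == "__main__":
    limiter = AmplificationLimiter()
    limiter.on_datagram_received(1_500)   # one full-size client packet (assumed size)
    print(limiter.sendable_bytes())       # 4500
    limiter.on_datagram_sent(4_000)
    print(limiter.sendable_bytes())       # 500
    limiter.on_address_validated()
    print(limiter.sendable_bytes())       # None
```

A single 1,500-byte client packet thus buys the server a budget of just 4,500 bytes, in line with the 4 to 6 KB mentioned above.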

In addition, other security problems, such as “replay attacks”, limit the type of HTTP request you can make in 0-RTT. For example, Cloudflare only allows HTTP GET requests without query parameters in 0-RTT. These restrictions limit the usefulness of 0-RTT even more.
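A policy like the one described for Cloudflare is easy to express. The function below is a hypothetical sketch of such a check, not Cloudflare’s actual code: it only admits GET requests whose target has no query string, on the assumption that such requests are the least likely to cause harmful side effects when an attacker replays them. Requests that fail the check would simply have to wait until the handshake completes.

```python
from urllib.parse import urlsplit


def allowed_in_0rtt(method: str, target: str) -> bool:
    """Conservative replay policy: only query-less GET requests may use 0-RTT."""
    if method.upper() != "GET":
        return False  # e.g. a replayed POST could change server state twice
    # urlsplit separates "/search?q=x" into path "/search" and query "q=x".
    return urlsplit(target).query == ""


if __name__ == "__main__":
    print(allowed_in_0rtt("GET", "/index.html"))       # True
    print(allowed_in_0rtt("GET", "/search?q=http3"))   # False
    print(allowed_in_0rtt("POST", "/login"))           # False
```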

Luckily, QUIC has options to make this a bit better. For example, the server can check whether the 0-RTT request comes from an IP that it has had a valid connection with before. However, that only works if the client stays on the same network (somewhat limiting QUIC’s connection migration feature). And even when it works, QUIC’s response is still limited by the congestion controller’s slow-start logic that we discussed above; so, there’s no extra big speed boost besides the one round trip saved.

Did You Know?

It’s interesting to note that QUIC’s three-times amplification limit also applies to its normal non-0-RTT handshake process in figure 2c. This can be a problem if, for example, the server’s TLS certificate is too large to fit inside 4 to 6 KB. In that case, it would have to be split, with the second chunk having to wait for the second round trip to be sent (after acknowledgements of the first few packets come in, indicating that the client’s IP was not spoofed). In this case, QUIC’s handshake might still end up taking two round trips, equal to TCP + TLS! This is why, for QUIC, techniques such as certificate compression will be extra important.
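The effect on the regular handshake can be estimated the same way. The function below is a deliberately simplified sketch: it assumes that everything the server cannot send within the three-times budget goes out exactly one round trip later, after the client’s acknowledgements validate its address, and it ignores congestion control. The byte counts in the example are hypothetical.

```python
def server_flight_round_trips(flight_bytes: int, client_bytes: int, factor: int = 3) -> int:
    """Round trips needed to deliver the server's first handshake flight."""
    budget = factor * client_bytes
    # Whatever exceeds the budget must wait for the client's acknowledgements.
    return 1 if flight_bytes <= budget else 2


if __name__ == "__main__":
    client_initial = 1_500                                    # assumed client bytes
    print(server_flight_round_trips(8_000, client_initial))   # 2: large certificate chain
    print(server_flight_round_trips(4_000, client_initial))   # 1: e.g. after compression
```

Under these assumptions, shaving a few kilobytes off the certificate chain can save an entire round trip.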

Did You Know?

It could be that certain advanced set-ups are able to mitigate these problems enough to make 0-RTT more useful. For example, the server could remember how much bandwidth a client had available the last time it was seen, making it less limited by the congestion controller’s slow start for reconnecting (non-spoofed) clients. This has been investigated in academia, and there’s even a proposed extension in QUIC to do this. Several companies already do this type of thing to speed up TCP as well.
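As an illustration of the first idea, a server could keep a small per-client record of the congestion window it last reached and reuse it for validated reconnections. Everything below is hypothetical (the class, its field names, how clients are keyed, and the default and cap values) and is not taken from the proposed QUIC extension; it only shows the shape of the logic, including the crucial rule that possibly spoofed addresses still start from the default window.

```python
from dataclasses import dataclass, field


@dataclass
class BandwidthMemory:
    """Hypothetical cache of the congestion window each client last reached."""
    default_window: int = 10   # packets, a typical initial window
    max_window: int = 100      # cap on how far a resumed connection may jump ahead
    last_seen: dict[str, int] = field(default_factory=dict)

    def remember(self, client_key: str, cwnd_packets: int) -> None:
        self.last_seen[client_key] = cwnd_packets

    def initial_window(self, client_key: str, address_validated: bool) -> int:
        if not address_validated:
            # A possibly spoofed client never gets to skip slow start.
            return self.default_window
        remembered = self.last_seen.get(client_key, self.default_window)
        # Reuse what worked last time, clamped to [default_window, max_window].
        return max(self.default_window, min(remembered, self.max_window))


if __name__ == "__main__":
    memory = BandwidthMemory()
    memory.remember("client-1", 60)
    print(memory.initial_window("client-1", address_validated=True))   # 60
    print(memory.initial_window("client-1", address_validated=False))  # 10
```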

Another option would be to have clients send more than one or two packets (for example, sending 7 more packets with padding), so the three-times limit translates to a more interesting 12- to 14-KB response, even after connection migration. I’ve written about this in one of my papers.

Finally, (misbehaving) QUIC servers could also intentionally increase the three-times limit if they feel it’s somehow safe to do so or if they don’t care about the potential security issues (after all, there’s no protocol police preventing this).

What Does It All Mean?

QUIC’s faster connection set-up with 0-RTT is really more of a micro-optimization than a revolutionary new feature. Compared to a state-of-the-art TCP + TLS 1.3 set-up, it would save a maximum of one round trip. The amount of data that can actually be sent in the first round trip is additionally limited by a number of security considerations.

As such, this feature will mostly shine either if your users are on networks with very high latency (say, satellite networks with RTTs of more than 200 milliseconds) or if you typically don’t send much data. Some examples of the latter are heavily cached websites, as well as single-page apps that periodically fetch small updates via APIs, and other protocols such as DNS-over-QUIC. One of the reasons Google saw very good 0-RTT results for QUIC was that it tested it on its already heavily optimized search page, where query responses are quite small.

In other cases, you’ll gain only a few dozen milliseconds at best, and even less if you’re already using a CDN (which you should be doing if you care about performance!).

Connection Migration

A third performance feature makes QUIC faster when moving between networks, by keeping existing connections intact. While this indeed works, this type of network change doesn’t happen all that often, and connections still need to reset their send rates.

As discussed in part 1, QUIC’s connection IDs (CIDs) allow it to perform connection migration when switching networks. We illustrated this with a client moving from a Wi-Fi network to 4G while doing a large file download. On TCP, that download might have to be aborted, whereas on QUIC it might continue.

First, however, consider how often that type of scenario actually happens. You might think this also occurs when moving between Wi-Fi access points within a building or between cellular towers while on the road. In those set-ups, however (if they’re done correctly), your device will typically keep its IP intact, because the transition between wireless base stations is done at a lower protocol layer. As such, migration occurs only when you move between completely different networks, which I’d say doesn’t happen all that often.

Secondly, we can ask whether this also works for use cases other than large file downloads and live video conferencing and streaming. If you’re loading a web page at the exact moment of switching networks, you might indeed have to re-request some of the (later) resources.

However, loading a page typically takes on the order of seconds, so coinciding with a network switch is also not going to be very common. Additionally, for use cases where this is a pressing concern, other mitigations are typically already in place. For example, servers offering large file downloads can support HTTP range requests to allow resumable downloads.
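Resumable downloads with HTTP range requests need very little client logic. The sketch below builds the standard `Range` header for the bytes still missing and interprets the response status; the helper names are made up for this example. The resulting header could be passed to any HTTP client, for instance via `urllib.request.Request(url, headers=...)` in Python’s standard library.

```python
def resume_headers(bytes_already_received: int) -> dict[str, str]:
    """Ask the server for the remainder of a file, starting at the given offset."""
    if bytes_already_received < 0:
        raise ValueError("byte count cannot be negative")
    # "bytes=N-" means: from zero-based byte offset N through the end of the file.
    return {"Range": f"bytes={bytes_already_received}-"}


def should_append(status_code: int) -> bool:
    # 206 Partial Content: the range was honored, so append to the partial file.
    # 200 OK: the server ignored the range and resends everything; start over.
    return status_code == 206


if __name__ == "__main__":
    print(resume_headers(1_048_576))   # {'Range': 'bytes=1048576-'}
    print(should_append(206), should_append(200))  # True False
```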

Because there is typically some overlap between network 1 dropping off and network 2 becoming available, video apps can open multiple connections (one per network), syncing them before the old network goes away completely. The user will still notice the switch, but it won’t drop the video feed entirely.

Thirdly, there is no guarantee that the new network will have as much bandwidth available as the old one. As such, even though the conceptual connection is kept intact, the QUIC server cannot just keep sending data at high speeds. Instead, to avoid overloading the new network, it needs to reset (or at least lower) the send rate and start again in the congestion controller’s slow-start phase.

Because this initial send rate is typically too low to really support things such as video streaming, you will see some quality loss or hiccups, even on QUIC. In a way, connection migration is more about preventing connection context churn and overhead on the server than about improving performance.

Did You Know?

Note that, as discussed for 0-RTT above, we can devise some advanced techniques to improve connection migration. For example, we can, again, try to remember how much bandwidth was available on a given network last time and attempt to ramp up to that level faster after a new migration. Additionally, we could envision not simply switching between networks, but using both at the same time. This concept is called multipath, and we discuss it in more detail below.

So far, we have mainly talked about active connection migration, where users move between different networks. There are, however, also cases of passive connection migration, where a certain network itself changes parameters. A good example of this is network address translation (NAT) rebinding. While a full discussion of NAT is out of the scope of this article, it mainly means that the connection’s port numbers can change at any given time, without warning. In most routers, this also happens much more often for UDP than for TCP.

If this occurs, the QUIC CID will not change, and most implementations will assume that the user is still on the same physical network and will thus not reset the congestion window or other parameters. QUIC also includes features such as PINGs and timeout indicators to prevent this from happening, because it typically occurs for long-idle connections.

We discussed in part 1 that, for security reasons, QUIC doesn’t just use a single CID. Instead, it changes CIDs when performing active migration. In practice, it’s even more complicated, because client and server each have separate lists of CIDs (called source and destination CIDs in the QUIC RFC). This is illustrated in figure 5 below.

This is done to allow each endpoint to choose its own CID format and contents, which in turn is crucial to allowing advanced routing and load-balancing logic. With connection migration, load balancers can no longer just look at the 4-tuple to identify a connection and send it to the correct back-end server. However, if all QUIC connections were to use random CIDs, this would heavily increase memory requirements at the load balancer, because it would need to store mappings of CIDs to back-end servers. Additionally, this would still not work with connection migration, as the CIDs change to new random values.

As such, it's important that QUIC back-end servers deployed behind a load balancer have a predictable format for their CIDs, so that the load balancer can derive the correct back-end server from the CID, even after migration. Some options for doing this are described in the IETF's proposed document. To make this all possible, the servers need to be able to choose their own CIDs, which wouldn't be possible if the connection initiator (which, for QUIC, is always the client) chose the CID. This is why there is a split between client and server CIDs in QUIC.
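To make the idea of a "predictable CID format" more concrete, here is a minimal sketch. It assumes a hypothetical layout in which a server puts its one-byte server ID at the start of every CID it issues and fills the rest with random bytes; the real options in the IETF document are more elaborate, and `issue_cid`, `route`, and the back-end names are illustrative only. Note that the load balancer keeps no per-connection table and never looks at the client's IP address or port, which is also why a NAT rebinding does not break routing.

```python
import os

# Hypothetical CID layout (not a standardized format): byte 0 is the
# back-end server ID, the remaining bytes are random.
CID_LENGTH = 8

def issue_cid(server_id: int) -> bytes:
    """Server side: create a fresh CID that encodes this server's ID."""
    if not 0 <= server_id <= 255:
        raise ValueError("server_id must fit in one byte")
    return bytes([server_id]) + os.urandom(CID_LENGTH - 1)

def route(destination_cid: bytes, backends: dict[int, str]) -> str:
    """Load balancer side: derive the back-end from the CID alone.

    No per-connection state is stored, and the client's IP address and
    port (the 4-tuple) are never consulted, so a NAT rebinding or an
    active migration to a new network does not change the decision."""
    return backends[destination_cid[0]]

backends = {1: "backend-1.internal", 2: "backend-2.internal"}

# Server 2 hands out several CIDs for one connection; the client switches
# to a new one when it migrates, yet every CID still routes to server 2.
cids = [issue_cid(2) for _ in range(3)]
print([route(cid, backends) for cid in cids])
```

Putting the server ID in plaintext like this makes it visible to on-path observers, which is why the IETF document also discusses variants that encrypt it before embedding it in the CID.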

What does it all mean?

Thus, connection migration is a situational feature. Initial tests by Google, for example, show low percentage improvements for its use cases. Many QUIC implementations don't yet implement this feature. Even those that do will typically limit it to mobile clients and apps, and not their desktop equivalents. Some people are even of the opinion that the feature isn't needed, because opening a new connection with 0-RTT should have similar performance properties in most cases.

Still, depending on your use case or user profile, it could have a large impact. If your website or app is most often used while on the move (say, something like Uber or Google Maps), then you'd probably benefit more than if your users were typically sitting behind a desk. Similarly, if you're focusing on constant interaction (be it video chat, collaborative editing, or gaming), then your worst-case scenarios should improve more than if you run a news website.

Head-of-Line Blocking Removal

The fourth performance feature is intended to make QUIC faster on networks with a high amount of packet loss by mitigating the head-of-line (HoL) blocking problem. While this is true in theory, we'll see that in practice it will probably provide only minor benefits for web-page loading performance.

To understand this, though, we first need to take a detour and talk about stream prioritization and multiplexing.

Stream Prioritization

As discussed in part 1, a single TCP packet loss can delay data for multiple in-transit resources, because TCP's bytestream abstraction considers all data to be part of a single file. QUIC, on the other hand, is intimately aware that there are multiple concurrent bytestreams and can handle loss on a per-stream basis. However, as we've also seen, these streams are not truly transmitting data in parallel: Rather, the stream data is multiplexed onto a single connection. This multiplexing can happen in many different ways.

For example, for streams A, B, and C, we might see a packet sequence of ABCABCABCABCABCABCABCABC, where we change the active stream in each packet (let's call this round-robin). However, we might also see the opposite pattern of AAAAAAAABBBBBBBBCCCCCCCC, where each stream is completed in full before starting the next one (let's call this sequential). Of course, many other options are possible in between these extremes (AAAABBCAAAAABBC…, AABBCCAABBCC…, ABABABCCCC…, etc.). The multiplexing scheme is dynamic and driven by an HTTP-level feature called stream prioritization (discussed later in this article).
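The patterns above can be generated with a tiny helper. This is a toy model, assuming every stream consists of a fixed number of equally sized packets; the `multiplex` function and its `chunk` parameter are invented for illustration and do not describe how any particular browser or server schedules its data.

```python
def multiplex(streams: dict[str, int], chunk: int) -> str:
    """Give each stream up to `chunk` packets per turn, cycling through
    the streams until all of them are drained.

    chunk=1 is per-packet round-robin; a chunk at least as large as the
    biggest stream degenerates into sequential multiplexing."""
    if chunk < 1:
        raise ValueError("chunk must be at least 1")
    remaining = dict(streams)
    order = []
    while any(remaining.values()):
        for name in remaining:
            take = min(chunk, remaining[name])
            order.append(name * take)
            remaining[name] -= take
    return "".join(order)

streams = {"A": 8, "B": 8, "C": 8}  # packets per stream
print(multiplex(streams, 1))  # ABCABCABCABCABCABCABCABC (round-robin)
print(multiplex(streams, 2))  # AABBCCAABBCCAABBCCAABBCC
print(multiplex(streams, 8))  # AAAAAAAABBBBBBBBCCCCCCCC (sequential)
```

A single knob like `chunk` is enough to sweep from one extreme to the other, which is handy for experimenting with the trade-offs discussed in the rest of this section.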

As it turns out, which multiplexing scheme you choose can have a huge impact on website loading performance. You can see this in the video below, courtesy of Cloudflare, as every browser uses a different multiplexer. The reasons why are quite complex, and I've written several academic papers on the topic, as well as talked about it at a conference. Patrick Meenan, of Webpagetest fame, even has a three-hour tutorial on just this topic.

Stream multiplexing differences can have a large impact on website loading in different browsers.

Luckily, we can explain the basics relatively easily. As you may know, some resources can be render blocking. This is the case for CSS files and for some JavaScript in the HTML head element. While these files are loading, the browser cannot paint the page (or, for example, execute new JavaScript).

What's more, CSS and JavaScript files need to be downloaded in full in order to be used (although they can often be incrementally parsed and compiled). As such, these resources need to be loaded as soon as possible, with the highest priority. Let's consider what would happen if A, B, and C were all render-blocking resources.

If we use a round-robin multiplexer (the top row in figure 6), we would actually delay each resource's total completion time, because they all need to share bandwidth with the others. Since we can only use them after they are fully loaded, this incurs a significant delay. However, if we multiplex them sequentially (the bottom row in figure 6), we see that A and B complete much earlier (and can be used by the browser), while C's completion time is not actually delayed.
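The completion-time argument can be checked with a few lines of arithmetic. This sketch assumes a toy model in which exactly one packet leaves the sender per time slot, there is no loss, and a resource is only usable once its last packet has been sent; `completion_times` is a hypothetical helper, not part of any real API.

```python
def completion_times(order: str) -> dict[str, int]:
    """Time slot (1-based) in which each stream's last packet is sent,
    assuming exactly one packet leaves the sender per slot."""
    done = {}
    for slot, name in enumerate(order, start=1):
        done[name] = slot  # overwritten until the stream's final packet
    return done

round_robin = "ABC" * 8
sequential = "A" * 8 + "B" * 8 + "C" * 8
print(completion_times(round_robin))  # {'A': 22, 'B': 23, 'C': 24}
print(completion_times(sequential))   # {'A': 8, 'B': 16, 'C': 24}
```

In both cases C finishes in slot 24, but with sequential multiplexing A and B become usable much earlier (slots 8 and 16 instead of 22 and 23).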

However, that doesn't mean that sequential multiplexing is always the best, because some (mostly non-render-blocking) resources (such as HTML and progressive JPEGs) can actually be processed and used incrementally. In those (and some other) cases, it makes sense to use the first option (or at least something in between).

Still, for most web-page resources, it turns out that sequential multiplexing performs best. This is, for example, what Google Chrome is doing in the video above, while Internet Explorer is using the worst-case round-robin multiplexer.

Packet Loss Resilience

Now that we know that not all streams are always active at the same time and that they can be multiplexed in different ways, we can consider what happens if we have packet loss. As explained in part 1, if one QUIC stream experiences packet loss, then other active streams can still be used (whereas, in TCP, all would be paused).

However, as we've just seen, having many concurrent active streams is typically not optimal for web performance, because it can delay some critical (render-blocking) resources, even without packet loss! We'd rather have just one or two active at the same time, using a sequential multiplexer. However, this reduces the impact of QUIC's HoL blocking removal.

Imagine, for example, that the sender could transmit 12 packets at a given time (see figure 7 below). Remember that this is limited by the congestion controller. If we fill all 12 of those packets with data for stream A (because it's high priority and render-blocking; think main.js), then we would have only one active stream in that 12-packet window.

If one of those packets were lost, then QUIC would still end up fully HoL blocked, because there would simply be no other streams it could process besides A: All of the data is for A, so everything would still have to wait (we have no B or C data to process), just like with TCP.

We see that we have a kind of contradiction: Sequential multiplexing (AAAABBBBCCCC) is typically better for web performance, but it doesn't allow us to take much advantage of QUIC's HoL blocking removal. Round-robin multiplexing (ABCABCABCABC) would be better against HoL blocking, but worse for web performance. As such, one best practice or optimization can end up undoing another.

And it gets worse. Up until now, we've sort of assumed that individual packets get lost one at a time. However, this isn't always true, because packet loss on the Internet is often "bursty", meaning that multiple packets often get lost at the same time.

As discussed above, an important reason for packet loss is that a network is overloaded with too much data and has to drop the excess packets. This is why the congestion controller starts sending slowly. However, it then keeps increasing its send rate until… there is packet loss!

Put differently, the mechanism that's intended to prevent overloading the network actually overloads the network (albeit in a controlled fashion). On most networks, that happens after quite a while, when the send rate has increased to hundreds of packets per round trip. When those reach the limit of the network, several of them are typically dropped together, leading to the bursty loss patterns.
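The following toy simulation illustrates why the first losses tend to come in a burst. It is a deliberately simplified model, assuming classic slow start (the window doubles every round trip) and a bottleneck that can carry a fixed number of packets per round trip, queue included; real congestion controllers also react to ACK timing, use pacing, and differ in the details. The function name and the numbers are hypothetical.

```python
def slow_start_until_loss(initial_cwnd: int, capacity: int) -> list[tuple[int, int, int]]:
    """Toy slow start: double the congestion window every round trip until
    the bottleneck, which can carry `capacity` packets per round trip
    (queue included), overflows. Returns (rtt, sent, dropped) per round."""
    if initial_cwnd < 1:
        raise ValueError("initial_cwnd must be at least 1")
    rounds = []
    cwnd, rtt = initial_cwnd, 1
    while True:
        dropped = max(0, cwnd - capacity)  # everything beyond capacity is lost
        rounds.append((rtt, cwnd, dropped))
        if dropped:
            return rounds
        cwnd *= 2
        rtt += 1

for rtt, sent, dropped in slow_start_until_loss(initial_cwnd=10, capacity=300):
    print(f"RTT {rtt}: sent {sent:4d} packets, dropped {dropped}")
```

With these made-up numbers, nothing is lost for five round trips, and then 20 packets are dropped in the same round trip: the window overshoots the network's limit by a large margin in one step.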

Did You Know?

This is one of the reasons why we wanted to move to a single (TCP) connection with HTTP/2, rather than the 6 to 30 connections with HTTP/1.1. Because each individual connection ramps up its send rate in pretty much the same way, HTTP/1.1 could get a good speed-up at the start, but the connections could then start causing massive packet loss for each other as they overloaded the network.

At the time, Chromium developers speculated that this behaviour caused much of the packet loss seen on the Internet. This is also one of the reasons why BBR has become an often-used congestion-control algorithm, because it uses fluctuations in observed RTTs, rather than packet loss, to assess the available bandwidth.

Did You Know?

Other causes of packet loss can lead to fewer or individual packets being lost (or becoming unusable), especially on wireless networks. There, however, the losses are often detected at lower protocol layers and solved between two local entities (say, the smartphone and the 4G cellular tower), rather than by retransmissions between the client and the server. These usually don't lead to real end-to-end packet loss, but rather show up as variations in packet latency (or "jitter") and reordered packet arrivals.

So, let's say we're using a per-packet round-robin multiplexer (ABCABCABCABCABCABCABCABC…) to get the most out of HoL blocking removal, and we get a bursty loss of just 4 packets. We see that this will always impact all 3 streams (see figure 8, middle row)! In this case, QUIC's HoL blocking removal provides no benefits, because all streams have to wait for their own retransmissions.

To lower the risk of multiple streams being affected by a lossy burst, we need to group more data for each stream together. For example, AABBCCAABBCCAABBCCAABBCC… is a small improvement, and AAAABBBBCCCCAAAABBBBCCCC… (see bottom row in figure 8 above) is even better. You can again see that a more sequential approach is better, even though it reduces the chances that we have multiple concurrent active streams.
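The claim about bursts can be checked by brute force. The sketch below slides a burst of four consecutive lost packets over every possible position in each pattern and counts how many distinct streams lose data. It assumes a burst is equally likely to start at any packet, which is a simplification; `streams_hit_by_burst` is a hypothetical helper.

```python
def streams_hit_by_burst(order: str, burst: int) -> tuple[int, float]:
    """Slide a burst of `burst` consecutive lost packets over every position
    in `order`; return the worst-case and average number of distinct
    streams that lose data (and therefore have to wait for retransmits)."""
    hits = [len(set(order[i:i + burst])) for i in range(len(order) - burst + 1)]
    return max(hits), sum(hits) / len(hits)

patterns = {
    "round-robin": "ABC" * 8,
    "pairs": "AABBCC" * 4,
    "quads": "AAAABBBBCCCC" * 2,
}
for name, order in patterns.items():
    worst, avg = streams_hit_by_burst(order, burst=4)
    print(f"{name:12s} worst={worst} avg={avg:.2f}")
```

With a 4-packet burst, round-robin always hits all three streams, the AABBCC layout hits two or three, and the AAAABBBBCCCC layout never hits more than two, matching the progression described above.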

In the end, predicting the actual impact of QUIC's HoL blocking removal is difficult, because it depends on the number of streams, the size and frequency of the loss bursts, how the stream data is actually used, etc. However, most results at this time indicate that it will not help much for the use case of web-page loading, because there we typically want fewer concurrent streams.

If you want even more detail on this topic or just some concrete examples, please check out my in-depth article on HTTP HoL blocking.

Did You Know?

As with the previous sections, some advanced techniques can help us here. For example, modern congestion controllers use packet pacing. This means that they don't send, for example, 100 packets in a single burst, but rather spread them out over an entire RTT. This conceptually lowers the chances of overloading the network, and the QUIC Recovery RFC strongly recommends using it. Complementarily, some congestion-control algorithms such as BBR don't keep increasing their send rate until they cause packet loss, but rather back off before that (by looking at, for example, RTT fluctuations, because RTTs also rise when a network is becoming overloaded).

While these approaches lower the overall chances of packet loss, they don't necessarily lower its burstiness.
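The basic idea of pacing, using the "100 packets over one RTT" example above, can be sketched as an even spread of send times. This is a simplified illustration: the function name is hypothetical, and real pacers typically send somewhat faster than this perfectly even spread and allow small bursts, so treat the numbers as a rough picture only.

```python
def pacing_schedule(cwnd_packets: int, rtt_ms: float) -> list[float]:
    """Send times (ms, relative to the start of the round trip) when a
    window of `cwnd_packets` is spread evenly over one RTT instead of
    being sent back-to-back in a single burst."""
    if cwnd_packets < 1:
        raise ValueError("cwnd_packets must be at least 1")
    gap = rtt_ms / cwnd_packets
    return [i * gap for i in range(cwnd_packets)]

times = pacing_schedule(cwnd_packets=100, rtt_ms=50.0)
print(times[:3], times[-1])  # [0.0, 0.5, 1.0] 49.5
```

Instead of 100 packets hitting the bottleneck queue at once, one packet arrives every 0.5 ms, giving the network time to drain between them.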

What does it all mean?

While QUIC's HoL blocking removal means, in theory, that it (and HTTP/3) should perform better on lossy networks, in practice this depends on a lot of factors. Because the use case of web-page loading typically favours a more sequential multiplexing set-up, and because packet loss is unpredictable, this feature would, again, likely benefit mainly the slowest 1% of users. However, this is still a very active area of research, and only time will tell.

Still, there are situations that might see more improvements. These are mostly outside of the typical use case of the first full page load: for example, when resources are not render blocking, when they can be processed incrementally, when streams are completely independent, or when less data is sent at the same time.

Examples include repeat visits on well-cached pages, and background downloads and API calls in single-page apps. For example, Facebook has seen some benefits from HoL blocking removal when using HTTP/3 to load data in its native app.

UDP and TLS Performance

A fifth performance aspect of QUIC and HTTP/3 is about how efficiently and performantly they can actually create and send packets on the network. We'll see that QUIC's use of UDP and heavy encryption can make it a fair bit slower than TCP (but things are improving).

First, we've already discussed that QUIC's use of UDP was more about flexibility and deployability than about performance. This is evidenced even more by the fact that, up until recently, sending QUIC packets over UDP was typically much slower than sending TCP packets. This is partly because of where and how these protocols are typically implemented (see figure 9 below).

As discussed above, TCP and UDP are typically implemented directly in the OS's fast kernel. In contrast, TLS and QUIC implementations mostly live in slower user space (note that this isn't strictly needed for QUIC; it's mostly done because it's much more flexible). This already makes QUIC a bit slower than TCP.

Additionally, when sending data from our user-space software (say, browsers and web servers), we need to pass this data to the OS kernel, which then uses TCP or UDP to actually put it on the network. Passing this data is done using kernel APIs (system calls), which involves a certain amount of overhead per API call. For TCP, these overheads were much lower than for UDP.

This is mostly because, historically, TCP has been used a lot more than UDP. As such, over time, many optimizations were added to TCP implementations and kernel APIs to reduce packet send and receive overheads to a minimum. Many network interface controllers (NICs) even have built-in hardware-offload features for TCP. UDP, however, was not as lucky, because its more limited use didn't justify the investment in added optimizations. In the past five years, this has luckily changed, and most OSes have since added optimized options for UDP as well.

Secondly, QUIC has a lot of overhead because it encrypts each packet individually. This is slower than using TLS over TCP, because there you can encrypt packets in chunks (up to about 16 KB, or 11 packets, at a time), which is more efficient. This was a conscious trade-off made in QUIC, because bulk encryption can lead to its own forms of HoL blocking.

Unlike the first point, where we could add extra APIs to make UDP (and thus QUIC) faster, here QUIC will always have an inherent disadvantage compared to TCP + TLS. However, this is also quite manageable in practice with, for example, optimized encryption libraries and clever methods that allow QUIC packet headers to be encrypted in bulk.

As a result, while Google's earliest QUIC versions were still twice as slow as TCP + TLS, things have certainly improved since. For example, in recent tests, Microsoft's heavily optimized QUIC stack was able to reach 7.85 Gbps, compared to 11.85 Gbps for TCP + TLS on the same system (so here, QUIC is about 66% as fast as TCP + TLS).

This is with the recent Windows updates, which made UDP faster (for a full comparison, UDP throughput on that system was 19.5 Gbps). The most optimized version of Google's QUIC stack is currently about 20% slower than TCP + TLS. Earlier tests by Fastly on a less advanced system and with a few tricks even claim equal performance (about 450 Mbps), showing that, depending on the use case, QUIC can definitely compete with TCP.

However, even if QUIC were twice as slow as TCP + TLS, it's not all that bad. First, QUIC and TCP + TLS processing is typically not the heaviest thing happening on a server, because other logic (say, HTTP, caching, proxying, etc.) also needs to execute. As such, you won't actually need twice as many servers to run QUIC (it's a bit unclear how much impact it will have in a real data center, though, because none of the big companies have released data on this).

Secondly, there are still plenty of opportunities to optimize QUIC implementations in the future. For example, over time, some QUIC implementations will (partially) move to the OS kernel (much like TCP) or bypass it (some already do, like MsQuic and Quant). We can also expect QUIC-specific hardware to become available.

Still, there will likely be some use cases for which TCP + TLS will remain the preferred option. For example, Netflix has indicated that it probably won't move to QUIC anytime soon, having invested heavily in custom FreeBSD set-ups to stream its videos over TCP + TLS.

Similarly, Facebook has said that QUIC will probably mainly be used between end users and the CDN's edge, but not between data centers or between edge nodes and origin servers, due to its larger overhead. In general, very high-bandwidth scenarios will probably continue to favour TCP + TLS, especially in the next few years.

Did You Know?

Optimizing network stacks is a deep and technical rabbit hole of which the above merely scratches the surface (and misses a lot of nuance). If you're brave enough, or if you want to know what terms like GRO/GSO, SO_TXTIME, kernel bypass, and sendmmsg() and recvmmsg() mean, I can recommend some excellent articles on optimizing QUIC by Cloudflare and Fastly, as well as an extensive code walkthrough by Microsoft and an in-depth talk from Cisco. Finally, a Google engineer gave a very interesting keynote about optimizing their QUIC implementation over time.

What does it all mean?

QUIC's particular use of the UDP and TLS protocols has historically made it much slower than TCP + TLS. However, over time, several improvements have been made (and more will continue to be implemented) that have closed the gap somewhat. You probably won't notice these discrepancies in typical web-page loading use cases, though they might give you headaches if you maintain large server farms.

HTTP/3 Features

Up until now, we've mainly talked about new performance features in QUIC versus TCP. However, what about HTTP/3 versus HTTP/2? As discussed in part 1, HTTP/3 is really HTTP/2-over-QUIC, and as such, no real, big new features were introduced in the new version. This is unlike the move from HTTP/1.1 to HTTP/2, which was much larger and introduced new features such as header compression, stream prioritization, and server push. These features are all still in HTTP/3, but there are some important differences in how they are implemented under the hood.

This is mostly because of how QUIC's removal of HoL blocking works. As we've discussed, a loss on stream B no longer implies that streams A and C have to wait for B's retransmissions, as they did over TCP. As such, if A, B, and C each sent a QUIC packet in that order, their data might well be delivered to (and processed by) the browser as A, C, B! Put differently, unlike TCP, QUIC is no longer fully ordered across different streams!
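The A, C, B example can be reproduced with a small simulation of the receiver side. This is a simplified model, assuming each packet carries a global sequence number (what a single TCP bytestream cares about) plus a stream ID and an offset within that stream (what QUIC cares about); real QUIC retransmits lost data in new packets with new packet numbers, but the stream offsets stay the same, which is all that matters here.

```python
from collections import defaultdict

# Each packet: (global sequence number, stream ID, offset within that stream)
Packet = tuple[int, str, int]

def tcp_delivery(arrivals: list[Packet]) -> list[str]:
    """One ordered bytestream: nothing after a gap is released to the app."""
    buffered, next_seq, out = {}, 0, []
    for seq, stream, _ in arrivals:
        buffered[seq] = stream
        while next_seq in buffered:
            out.append(buffered.pop(next_seq))
            next_seq += 1
    return out

def quic_delivery(arrivals: list[Packet]) -> list[str]:
    """Independent streams: each stream only waits for its own gaps."""
    buffered = defaultdict(dict)    # stream -> {offset: stream}
    next_offset = defaultdict(int)  # stream -> next expected offset
    out = []
    for _, stream, offset in arrivals:
        buffered[stream][offset] = stream
        while next_offset[stream] in buffered[stream]:
            out.append(buffered[stream].pop(next_offset[stream]))
            next_offset[stream] += 1
    return out

# A, B, and C each send one packet, in that order. B's packet is lost,
# so its retransmission arrives last.
arrivals = [(0, "A", 0), (2, "C", 0), (1, "B", 0)]
print(tcp_delivery(arrivals))   # ['A', 'B', 'C']  (C waited for B)
print(quic_delivery(arrivals))  # ['A', 'C', 'B']
```

Any HTTP/2 feature that silently assumed "C's data is always processed after B's" breaks under the second delivery order.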

This lack of ordering across streams is a problem for HTTP/2, which really relied on TCP's strict ordering in the design of many of its features, which use special control messages interspersed with data chunks. In QUIC, these control messages might arrive (and be applied) in any order, potentially even making the features do the opposite of what was intended! The technical details are, again, unnecessary for this article, but the first half of this paper should give you an idea of how ridiculously complex this can get.

As such, the internal mechanics and implementations of these features have had to change for HTTP/3. A concrete example is HTTP header compression, which lowers the overhead of repeated large HTTP headers (for example, cookies and user-agent strings). In HTTP/2, this was done using the HPACK set-up, while for HTTP/3 it has been reworked into the more complex QPACK. Both systems deliver the same feature (i.e. header compression) but in quite different ways. Some excellent deep technical discussion and diagrams on this topic can be found on the Litespeed blog.

Something similar is true for the prioritization feature that drives the stream multiplexing logic we briefly discussed above. In HTTP/2, this was implemented using a complex "dependency tree" set-up, which explicitly tried to model all page resources and their interrelations (more information is in the talk "The Ultimate Guide to HTTP Resource Prioritization"). Using this system directly over QUIC could lead to some very wrong tree layouts, because adding each resource to the tree would be a separate control message.

Additionally, this approach turned out to be needlessly complex, leading to many implementation bugs and inefficiencies and to subpar performance on many servers. Both problems have led to the prioritization system being redesigned for HTTP/3 in a much simpler way. This more straightforward set-up makes some advanced scenarios difficult or impossible to implement (for example, proxying traffic from multiple clients on a single connection), but still enables a wide range of options for web-page loading optimization.

While, again, the two approaches deliver the same basic feature (guiding stream multiplexing), the hope is that HTTP/3's simpler set-up will lead to fewer implementation bugs.
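The simpler scheme that was eventually standardized for this is the Extensible Prioritization Scheme for HTTP (RFC 9218). A client signals just two parameters per request, an urgency from 0 (most urgent) to 7 (default 3) and an "incremental" flag, for example as a `Priority: u=1, i` header. The sketch below is a hypothetical server-side scheduler built on those two parameters. RFC 9218 leaves the exact scheduling to implementations, so the choice here to send non-incremental streams one by one before sharing bandwidth among incremental ones within an urgency level is an assumption, as are the example urgency values.

```python
from dataclasses import dataclass

@dataclass
class Stream:
    stream_id: int  # QUIC client-initiated bidirectional IDs: 0, 4, 8, ...
    name: str       # label used in the output, as in the A/B/C examples
    priority: str   # value of a Priority header, e.g. "u=1" or "u=4, i"
    packets: int

def parse_priority(value: str) -> tuple[int, bool]:
    """Tiny subset of the RFC 9218 syntax: 'u=<0-7>' and a bare 'i' flag.
    Defaults: urgency 3, not incremental. Not a full Structured Fields parser."""
    urgency, incremental = 3, False
    for item in (part.strip() for part in value.split(",")):
        if item.startswith("u="):
            urgency = int(item[2:])
        elif item == "i":
            incremental = True
    return urgency, incremental

def schedule(streams: list[Stream]) -> str:
    """Hypothetical scheduler: most urgent level first. Within a level,
    non-incremental streams are sent one at a time in stream-ID order,
    then incremental streams share the remaining slots round-robin."""
    order = []
    for level in sorted({parse_priority(s.priority)[0] for s in streams}):
        members = sorted(
            (s for s in streams if parse_priority(s.priority)[0] == level),
            key=lambda s: s.stream_id,
        )
        for s in members:
            if not parse_priority(s.priority)[1]:
                order.append(s.name * s.packets)  # sequential, in full
        remaining = {s.name: s.packets for s in members if parse_priority(s.priority)[1]}
        while any(remaining.values()):
            for name in remaining:
                if remaining[name] > 0:
                    order.append(name)
                    remaining[name] -= 1
    return "".join(order)

page = [
    Stream(0, "A", "u=0", 3),      # render-blocking CSS
    Stream(4, "B", "u=1", 2),      # render-blocking script
    Stream(8, "C", "u=3, i", 3),   # progressive JPEG
    Stream(12, "D", "u=3, i", 3),  # another progressive JPEG
]
print(schedule(page))  # AAABBCDCDCD
```

Two small parameters are enough to express "render-blocking resources sequentially first, incrementally usable images interleaved afterwards", without building or updating a dependency tree.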

Finally, there is server push. This feature allows the server to send HTTP responses without waiting for an explicit request for them first. In theory, this could deliver excellent performance gains. In practice, however, it turned out to be hard to use correctly and was inconsistently implemented. As a result, it is probably even going to be removed from Google Chrome.

Despite all this, it _is_ still defined as a feature in HTTP/3 (although few implementations support it). While its internal workings haven't changed as much as those of the previous two features, it too has been adapted to work around QUIC's non-deterministic ordering. Sadly, though, this will do little to solve some of its longstanding issues.

What does it all mean?

As we've said before, most of HTTP/3's potential comes from the underlying QUIC, not from HTTP/3 itself. While the protocol's internal implementation is very different from HTTP/2's, its high-level performance features and how they can and should be used have stayed the same.

Future Developments to Look Out For

In this series, I have regularly highlighted that faster evolution and higher flexibility are core aspects of QUIC (and, by extension, HTTP/3). As such, it should be no surprise that people are already working on new extensions to and applications of the protocols. Listed below are the main ones that you'll probably encounter somewhere down the line:

Forward error correction

The goal of this technique is, again, to improve QUIC's resilience to packet loss. It does this by sending redundant copies of the data (though cleverly encoded and compressed so that they're not as large). Then, if a packet is lost but the redundant data arrives, a retransmission is no longer needed.

This was originally part of Google QUIC (and one of the reasons why people say QUIC is good against packet loss), but it is not included in the standardized QUIC version 1, because its performance impact hadn't been proven yet. Researchers are now performing active experiments with it, though, and you can help them out by using the PQUIC-FEC Download Experiments app.
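The simplest form of forward error correction is a single XOR parity packet per group of packets. The sketch below shows that technique in isolation; it is not the encoding used by Google QUIC or by the PQUIC-FEC experiments, and it assumes equal-length packets (real schemes pad them).

```python
from functools import reduce
from typing import Optional

def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))

def make_parity(packets: list[bytes]) -> bytes:
    """One redundant packet: the byte-wise XOR of every packet in the group."""
    if len({len(p) for p in packets}) != 1:
        raise ValueError("this sketch assumes equal-length packets")
    return reduce(xor_bytes, packets)

def recover(received: list[Optional[bytes]], parity: bytes) -> list[bytes]:
    """If exactly one packet of the group is missing (None), rebuild it by
    XOR-ing the parity packet with all packets that did arrive."""
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) != 1:
        raise ValueError("XOR parity can repair exactly one lost packet")
    rebuilt = reduce(xor_bytes, [p for p in received if p is not None], parity)
    result = list(received)
    result[missing[0]] = rebuilt
    return result

group = [b"pkt-A", b"pkt-B", b"pkt-C"]
parity = make_parity(group)
arrived = [b"pkt-A", None, b"pkt-C"]  # packet B was lost in transit
print(recover(arrived, parity))       # [b'pkt-A', b'pkt-B', b'pkt-C']
```

The trade-off is visible even in this toy version: one extra packet per group of three costs about 33% overhead, and a bursty loss of two packets from the same group still cannot be repaired without a retransmission.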

Multipath QUIC

We've previously discussed connection migration and how it can help when moving from, say, Wi-Fi to cellular. However, doesn't that also imply we might use both Wi-Fi and cellular at the same time? Using both networks concurrently would give us more available bandwidth and increased robustness! That's the main concept behind multipath.

This is, again, something that Google experimented with but that didn't make it into QUIC version 1 due to its inherent complexity. However, researchers have since shown its high potential, and it might make it into QUIC version 2. Note that TCP multipath also exists, but it has taken almost a decade to become practically usable.

Unreliable data over QUIC and HTTP/3

As we've seen, QUIC is a fully reliable protocol. However, because it runs over UDP, which is unreliable, we can add a feature to QUIC to also send unreliable data. This is outlined in the proposed datagram extension. You would, of course, not want to use this to send web page resources, but it might be handy for things such as gaming and live video streaming. This way, users would get all of the benefits of UDP, but with QUIC-level encryption and (optional) congestion control.

WebTransport

Browsers don't expose TCP or UDP to JavaScript directly, mainly due to security concerns. Instead, we have to rely on HTTP-level APIs such as Fetch and the somewhat more flexible WebSocket and WebRTC protocols. The newest in this series of options is called WebTransport, which mainly allows you to use HTTP/3 (and, by extension, QUIC) in a more low-level way (although it can also fall back to TCP and HTTP/2 if needed).

Crucially, it will include the ability to use unreliable data over HTTP/3 (see the previous point), which should make things such as gaming quite a bit easier to implement in the browser. For normal (JSON) API calls, you would, of course, still use Fetch, which will also automatically use HTTP/3 when possible. WebTransport is still under heavy discussion at the moment, so it's not yet clear what it will eventually look like. Of the browsers, only Chromium is currently working on a public proof-of-concept implementation.

DASH and HLS video streaming

For non-live video (think YouTube and Netflix), browsers typically use the Dynamic Adaptive Streaming over HTTP (DASH) or HTTP Live Streaming (HLS) protocols. Both basically mean that you encode your videos into smaller chunks (of 2 to 10 seconds) at different quality levels (720p, 1080p, 4K, etc.).

At runtime, the browser estimates the highest quality your network can handle (or the most suitable one for a given use case), and it requests the relevant files from the server via HTTP. Because the browser doesn't have direct access to the TCP stack (as that's typically implemented in the kernel), it occasionally makes mistakes in these estimates, or it takes a while to react to changing network conditions (leading to video stalls).

Because QUIC is implemented as part of the browser, this could be improved quite a bit by giving the streaming estimators access to low-level protocol information (such as loss rates, bandwidth estimates, etc.). Other researchers have been experimenting with mixing reliable and unreliable data for video streaming as well, with some promising results.
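To see why estimates made purely from above the transport layer can lag, here is a minimal sketch of one common throughput-based adaptation heuristic: estimate bandwidth from the download rates of recent chunks, keep a safety margin, and pick the highest quality level that fits. The bitrate ladder, the harmonic-mean estimator, and the 0.8 safety factor are all illustrative assumptions, not the logic of any specific player.

```python
# Hypothetical bitrate ladder (kbps) for the quality levels mentioned above.
LADDER = {"720p": 3_000, "1080p": 6_000, "4K": 16_000}

def pick_quality(throughput_samples_kbps: list[float], safety: float = 0.8) -> str:
    """Throughput-based adaptive bitrate selection.

    Estimates bandwidth as the harmonic mean of recent chunk download rates
    (which damps the effect of a single unusually fast chunk), applies a
    safety margin, and picks the highest quality level that fits."""
    if not throughput_samples_kbps:
        raise ValueError("need at least one throughput sample")
    n = len(throughput_samples_kbps)
    estimate = n / sum(1 / s for s in throughput_samples_kbps)
    budget = estimate * safety
    fitting = [q for q, rate in LADDER.items() if rate <= budget]
    # Fall back to the lowest level if even that does not fit the budget.
    return max(fitting, key=LADDER.get) if fitting else min(LADDER, key=LADDER.get)

print(pick_quality([9_000, 8_000, 10_000]))  # 1080p
print(pick_quality([30_000, 30_000]))        # 4K
```

Because every sample is only available after a whole chunk has finished downloading, a sudden drop in bandwidth shows up seconds later; lower-level signals such as RTT or loss rates from an in-browser QUIC stack could, in principle, be fed into such an estimator much sooner.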

Protocols other than HTTP/3

With QUIC being a general-purpose transport protocol, we can expect many application-layer protocols that now run over TCP to run on top of QUIC as well. Some works in progress include DNS-over-QUIC, SMB-over-QUIC, and even SSH-over-QUIC. Because these protocols typically have very different requirements than HTTP and web-page loading, the QUIC performance improvements we've discussed might work much better for them.

What does it all mean?

QUIC version 1 is just the start. Many advanced performance-oriented features that Google had experimented with earlier did not make it into this first iteration. However, the goal is to evolve the protocol quickly, introducing new extensions and features at a high frequency. As such, over time, QUIC (and HTTP/3) should become clearly faster and more flexible than TCP (and HTTP/2).

Conclusion

In this second part of the series, we have discussed the many different performance features and aspects of HTTP/3 and especially QUIC. We've seen that while most of these features seem very impactful, in practice they might not do all that much for the average user in the use case of web-page loading that we've been considering.

For example, we've seen that QUIC's use of UDP doesn't mean that it can suddenly use more bandwidth than TCP, nor does it mean that it can download your resources more quickly. The often-lauded 0-RTT feature is really a micro-optimization that saves you one round trip, in which you can send about 5 KB (in the worst case).

HoL blocking removal doesn't work well if there is bursty packet loss or when you're loading render-blocking resources. Connection migration is highly situational, and HTTP/3 doesn't have any major new features that could make it faster than HTTP/2.

As such, you might expect me to recommend that you just skip HTTP/3 and QUIC. Why bother, right? However, I will most definitely do no such thing! Even though these new protocols might not help users on fast (urban) networks much, the new features do certainly have the potential to be highly impactful for highly mobile users and people on slow networks.

Even in Western markets such as my own Belgium, where we generally have fast devices and access to high-speed cellular networks, these situations can affect 1% to even 10% of your user base, depending on your product. An example is someone on a train desperately trying to look up a critical piece of information on your website, but having to wait 45 seconds for it to load. I certainly know I've been in that situation, wishing someone had deployed QUIC to get me out of it.

However, there are other countries and regions where things are much worse still. There, the average user might look a lot more like the slowest 10% in Belgium, and the slowest 1% might never get to see a loaded page at all. In many parts of the world, web performance is an accessibility and inclusivity problem.

This is why we should never test our pages only on our own hardware (but also use a service like Webpagetest), and also why you should definitely deploy QUIC and HTTP/3. Especially if your users are often on the move or unlikely to have access to fast cellular networks, these new protocols might make a world of difference, even if you don’t notice much on your cabled MacBook Pro. For more details, I highly recommend Fastly’s post on the issue.

If that doesn’t fully convince you, then consider that QUIC and HTTP/3 will continue to evolve and get faster in the years to come. Getting some early experience with the protocols will pay off down the road, allowing you to reap the benefits of new features as soon as possible. Additionally, QUIC enforces security and privacy best practices in the background, which benefit all users everywhere.

Finally convinced? Then stay tuned for part 3 of the series to read about how you can go about using the new protocols in practice.

