Have You Been Hacked? How to Clean Your Site and Get Off Google’s Blocklist

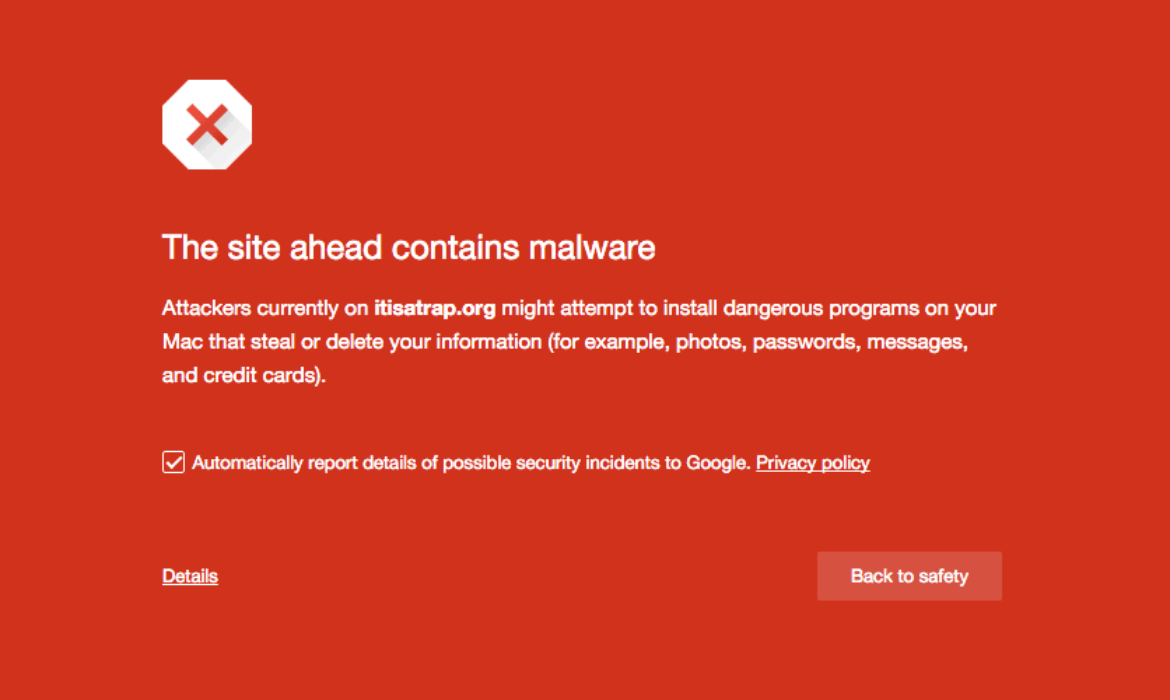

So, did it hurt? When you landed at the bottom of the SERPs, I mean, and Google slapped a scary red warning message on your site telling people to keep out.

If this happened due to an error on your part (bad SEO, shady linking tactics, etc) that’s one thing.

But if your site was hacked and now contains malicious code, that’s just adding insult to injury – and can really damage your reputation.

Unfortunately, that’s just one of the risks of being in charge of your own site maintenance. Stuff like this can happen.

Sure, it’s fantastic to build your own site in WordPress, but as Spiderman says, with great power comes great responsibility.

To put it plainly, you have control over how your site looks, but you’re also in control when/if your site runs into problems.

If you’re hacked, you will probably get blocklisted by Google. Period. Google isn’t going to take any chances with its reputation.

So, if your site smells even the slightest bit fishy, the search engine is going to blocklist you, knock you from your spot in the rankings that you’ve worked so hard for, send your site plummeting in the SERPs and tell anyone who lands on your site to stay away because it’s dangerous.

And that’s a real bummer. But the key is knowing what to do next.

Should you find yourself on Google’s Blocklist (or you’re a bit fuzzy on what the blocklist even is), we’ve put together a comprehensive step-by-step guide to getting it handled ASAP.

(Click here to go straight to the step-by-step-guide)

Google Blocklist: A Definition

A website that has been blocklisted by Google will generally experience a dramatic drop in organic search traffic.

It’s sudden and huge, and when your Analytics graph inverts sharply, it’s usually the biggest clue to a webmaster that something bad has happened.

How Did I Get on the Blocklist?

There are several ways your site might’ve got on the blocklist. But generally speaking, when a search engine finds suspicious code or activity on your site that its internal algorithms determine to be malware, it will remove the site from search results immediately.

Instead of risking the integrity of the search results and their safety for users, removing the questionable site is the least resource-intensive action the search engine can take.

Now, what is malware exactly?

In this case, it can be anything that Google deems suspicious, including phishing schemes, hacks, information or email address scrapers, trojan horses, and more.

The sad thing here is that you won’t even know your site has been hacked the vast majority of the time until your organic search traffic falls off a cliff.

In some cases, however, there will be tell-tale signs that something is amiss.

This can either come in the form of suspicious things you come across yourself or through warnings, shutdowns, or other actions taken by external sources.

Of course, there are occasions where the webmaster is responsible for the blocklisting.

Things you should never do if you want to avoid the blocklist include:

- Violating Copyright or DMCA Claims: Stealing content is frowned upon by the world. Don’t do it.

- Keyword Masking: Hiding keywords by making the text the same color as the site’s background is so 1998. If Google finds out, you’ll be de-indexed quick.

- Linking to Spammy Sites: Be mindful of where you link to. It should be a priority of yours to link only to high-quality sites.

However, a lot of the time, hackers will implement these link baiting and keyword spam schemes as a part of infecting your site with malware.

Regardless of whodunnit, however, Google will treat affected sites the same way: with a swift and thorough blocklisting.

What Does Blocklisting Look Like?

Blocklisting is fairly obvious when it happens.

Your analytics will take a nosedive, as I mentioned above. Or, if you do a simple Google search for “site:yoursitehere.com” and no results are found (assuming your site has already been indexed), chances are really good that your site has been blocklisted. This is one of the manual ways to check for blocklisting.

Another way to check for blocklisting is to regularly access and review data in Google Search Console.

This makes it easy for you to see what sites link to you, what search queries you’re ranked for, 404s, server errors, and overall site health.

Any funny business happening with your site is likely to show up here before your site is blocklisted, so keeping a watchful eye is really important when attempting to maintain the integrity of your site.

Security plugins can also be a great tool to help determine if your site has been hacked and/or blocklisted.

A Plugin to the Rescue

When it comes to a plugin, our very own answer to security, Defender, can stop brute force attacks, SQL injections, cross-site scripting XSS, and more vulnerabilities that will prevent you from getting hacked and blocklisted in the first place.

Defender can also scan your site and track down malicious code. If there’s malicious code detected, Defender shows you exactly what it is and the locations. You can then delete it in one click.

Be sure to read our article about stopping hackers in their tracks, so you don’t even have to worry about getting blocklisted by Google to begin with and try Defender out for yourself for free.

Security Warnings & Diagnostics: A Primer

So, we’ve already talked about preventative measures and the ways you can check to see if your site has been blocklisted, but I feel like it’s a good idea to spend additional time talking about what some refer to as the “symptoms” of being blocklisted.

Not every blocklisted site will exhibit these features, but this is a good rundown of what to look for:

- There is sudden traffic to your site for keywords that have nothing to do with your site’s content—particularly related to pharmaceuticals.

- Your site suddenly redirects to another site not in your possession.

- New administrators or users appear in your site’s dashboard that wasn’t created by you or anyone with authorized admin access.

- Your site is suddenly flagged as potentially containing malware in search engine results and by desktop or mobile anti-virus detection software.

- Your web host shuts down your site.

It’s important to note the various security warnings Google can provide as well. While these aren’t technically blocklisting, they can sometimes indicate your site is well on its way to being blocklisted.

Should you be fortunate enough to catch suspicious activity thanks to a security warning, you may be able to sidestep the headache of being blocklisted altogether.

These warnings appear on the search engine result page where your site is listed. They can also take a couple of different forms. Here are two of the most common warnings you’ll come across:

This site may harm your computer

This warning occurs when Google believes your site contains a Trojan or other piece of code that triggers a download prompt that is malicious.

Those fake anti-virus pop-ups and automatic file downloads are the most common examples of what Google is referring to when it displays this warning.

This site may be hacked

This gets to the point, doesn’t it? This warning displays when Google has reason to believe your site has been completely hacked and taken over by someone other than you.

The sudden appearance of content that doesn’t belong with the rest of your site, bank directories, and other red flags trigger this warning.

Other Blocklists

While this article focuses on getting off Google’s blocklist, it’s worth noting there are other blocklists that may pick up on malicious content or security threats on your site.

These are some of the main blocklists:

- Norton Safe Web

- Phish Tank

- Opera

- SiteAdvisor McAfee

- Yandex (via Sophos)

- ESET

If Google reports your site as clean, it is still possible for Opera (the browser, that is) or even Yandex (the search engine) to blocklist your site.

So if you do notice a drop in SERPs or security warnings displaying in browsers other than Chrome, it’s a good idea to check these other blocklists to see if your site has been compromised.

A Step-by-Step Guide for Getting off the Google Blocklist

Now that you’re all clear on what blocklisting is, how to tell if it’s happened, and what the warning signs are that you might be headed for the blocklist, we can start discussing how to get your site off it for good.

Step 1: Check if you’re blocklisted

Should go without saying, but you need to be 100% sure if your site has been blocklisted before you move forward.

- Check your site’s status to determine safe browsing. Just input your site’s URL and review the results.

- Use Google Search Console’s URL inspection tool to see what Googlebot sees when accessing your site.

Step 2: Locate the Suspicious Code

There are many different places you can look on your site to find malware.

As mentioned before, the simplest way of finding malware is with a resource like our Defender plugin.

If you’re not using a plugin like Defender, it’s not always so easy and scanning through the code on each page, however, sometimes the culprit is embedded in your server somewhere.

Still, there are a few places that hackers target more than others. You will need FTP access to get to some of these areas to start cleaning up the mess.

If your site is suddenly redirecting to another site, you should check the following areas for suspicious code:

- Core WordPress files

- Your site’s index (check both .php and .html!)

- .htaccess

If your site is now triggering downloads for visitors, check out the following spots:

- Header

- Footer

- Index (check both .php and HTML)

- Your theme’s files

If you’re suddenly seeing a bunch of Pharma information on your site and believe it’s been compromised by a phishing campaign, check:

- Any HTML file

- Index .php and .html

- For the appearance of new directories you didn’t create

You can also leverage the Google Diagnostic Page to figure out specifically what part of your site has been compromised. Is it just one page? One directory? Or the whole site?

Keep reading through the results to see when Google last visited your site.

This is referred to as the “scan date.” Also, take note of when Google found malware or suspicious content. This is referred to as the “discovery date.”

Now, if you’ve tried to fix your site after the last “scan date,” Google doesn’t know about it yet. Patience is a requirement when getting your site off the blocklist, unfortunately.

You can bring Google’s attention to your attempts to fix the issues, but we’ll talk more about that later.

Note: Sometimes, Google Search Console will show that certain HTML pages of your site have been infected, but this isn’t necessarily the case. When dealing with WordPress, likely, the core file responsible for generating the HTML file in question is infected.

Step 3: Dig Deeper: Pretend You’re a Bot or User Agent

Sometimes running tests to see if your site (or a client’s) is infected would put your own computer at risk.

You couldn’t just open up your web browser and load the site directly without putting your machine in danger.

So, to bypass this, you can use cURL in the command-line interface (CLI) to basically pretend you are a Google bot or a user agent.

For example, you would input the following to emulate a bot:

$ curl –location -D – -A “Googlebot” somesite.com

Once you input this, you’re going to want to look for anything that doesn’t make sense in the code.

So, bits that are in a different language than your own or content that looks like total gibberish.

Yes, you’ll need to understand HTML, at the very least, here. Anything in an iframe or script tag should get your careful attention, too.

You can also use this bit of code to emulate a user-agent:

$ curl -A “Mozilla/5.0 (compatible; MSIE 7.01; Windows NT 5.0)” http://www.somesite.com

You can swap out what browser is referenced here depending on your needs.

A few other commands you might want to get familiar with include Grep, Find, and SSH.

These will help you locate specifically where the hacking took place on your site, so you manually remove the code that put you on the blocklist.

If the CLI stuff is leaving you scratching your head, here’s a list of resources you can use to get up to speed on the terminal and the specific commands you’ll need to clean your site:

- Command line

- Taming the Terminal

- How to Use cURL

- How to use grep command in Unix and Linux with examples

- How to Use Find from the Windows Command Prompt

Once you locate the source of the problem, you can remove it.

Or, if you’re a WPMU DEV member and have any questions about specific code and whether it’s an issue, contact our 24/7 support, and we’ll help you out.

Step 4: Removing Bad Code

If your site has been hacked, you’ll need to remove the malware that caused the blocklisting and/or security warnings.

If the hackers created new pages with malicious code, you could remove them from the SERPs altogether by going to the Search Console and using the Remove URLs feature.

You’ll also want to delete the pages in question from your server, but using Remove URLs can help expedite Google’s awareness of your cleanup attempt.

Again, I’ll refer back to Defender here as a simple solution. You can easily remove suspicious code in one-click with his help.

One thing to keep in mind is Defender does not scan the DB tables in cases where a Pharma hack has already happened. In this case, infected content on post pages needs to be cleared manually.

Remember, you shouldn’t use Remove URLs for pages you want to be indexed but have bad code. This is a feature you should only use when a page should disappear from search results for good.

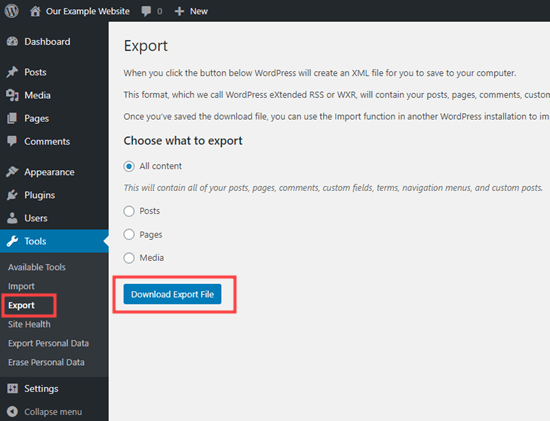

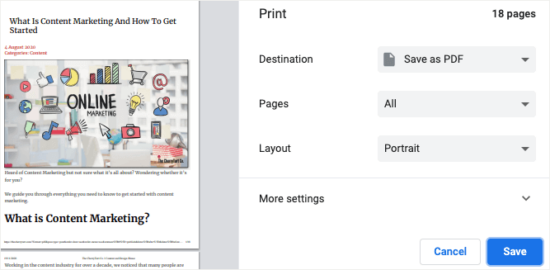

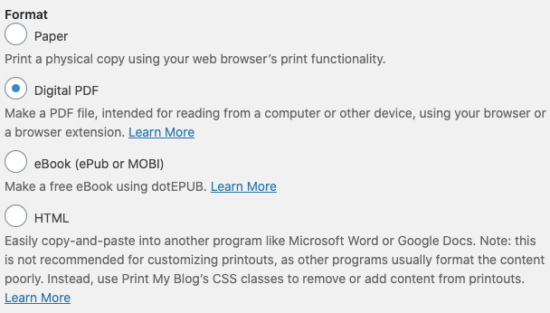

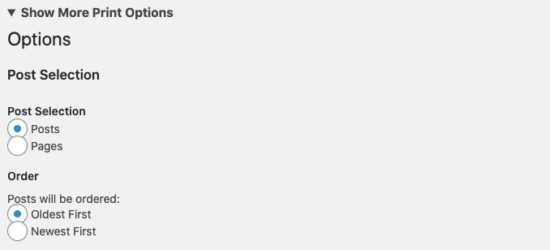

To remove all evidence of your site’s hacking, you’ll need to backup from an older version of your site.

Regular backups are super important for this very reason, so hopefully, you have a clean version of your site on file to use. This is the first step in cleaning your site’s server.

Next, install any new core, theme, and plugin updates that are available. Make sure everything is as up to date as possible. This will reduce your site’s vulnerabilities.

Follow best practices for site security here (limit the number of plugins you use, delete outdated themes you no longer use, old user accounts, etc).

Finally, change all the passwords for your site. And I mean all of them. Not just the WordPress administrator and user passwords.

You also need to change the passwords for your FTP account, database(s), hosting, and anything else related to your site to ensure security.

If the version of the site you’re restoring from the backup is way out of date, you should make a disk image of your infected but current site before installing the clean outdated version.

Once you install updates and change passwords, you’ll need to restore the new content manually. Google offers some pointers on how to accomplish this.

Step 5: Resubmit Your Site

If your site has been blocklisted, it’s been removed from the search results. To get back in the SERPs, you’ll need to submit your site for review.

Otherwise, Google won’t know that you’ve taken steps to remedy the problem (or, at least, won’t crawl across your squeaky clean site for a long time).

And every day your site is out of the SERPs is money lost, right? So to speed things up, you have to go through a couple of official channels.

If your site was infected with malware or was involved in phishing, you’ll need to submit a reconsideration request via Google Search Console.

The steps required to submit a review depend on your specific security issue/situation, But luckily GSC has kindly outlined the full review and reconsideration process right here.

Once you’ve completed the review process, if Google finds your site is clean, warnings from browsers and search results should be removed within 72 hours.

You should also verify your site works as expected: pages load properly and links are clickable.

If your request is NOT approved, reassess your site for malware or spam, or for any modifications or new files created by the hacker.

Alternatively, you might consider requesting more help from specialists (WPMU DEV’s superhero support team is a great place to start!)

Have You Ever Been Hacked?

The process of cleaning up after being hacked and getting on Google’s blocklist can be arduous at best, I’m not going to lie.

But if you lay out a plan or create a checklist for the steps to take, you can tick them off little by little until your site is clean, back online, and back in the SERPs.

Plus, you can prevent hacking in the first place. Check out our article on stopping Hackers in their tracks with Defender.

It’ll take some effect, but the important thing is you’ll restore your site’s reputation.

And if anything, it’ll allow you to prioritize security in a way that you might not have thought about before.

Small silver lining?

Join Us For Smashing Meets Happy Holidays

If you are missing your festive meetups this year or just fancy seeing some friendly faces and learning some new things join us on December 17th for another Smashing Meets event.

Tickets are only 10 USD (and free for our lovely Smashing Members). The fun starts at 9AM ET (Eastern Time) or 15:00 CET (Central European Time) on the 17th December.

Ok. This is important. Smashing Meets by @smashingconf was soooo much fun. I will have to tune in whenever the timezone suits, it was an absolute blast!!!

— Mandy Michael (@Mandy_Kerr) May 19, 2020

This time, we will have talks from three speakers—Adekunle Oduye, Ben Hong, and Michelle Barker. There will be an interface design challenge and chance to network and meet other attendees. Just like an in-person meetup but you won’t have to go out in the cold!

If you want to know more about how our Smashing Meets events work, we have a review of a previous event, see some of the videos, or just head on over to the event page and get a ticket! I hope to see you there.

We Need You In The Smashing Family

At Smashing, we are looking for a friendly, reliable and passionate person to drive the sales and management of sponsorship and advertising. We work with small and big companies to help them get exposure and have their voice heard across a number of different media — from this very magazine to our online conferences, meet-ups and workshops. This includes:

- Smashing Magazine — display advertising and sponsored articles.

- Smashing Conferences — online and in-person.

- Smashing Podcast — published once every 2 weeks.

- Smashing Online Workshops — happening every week.

We sincerely hope to find someone who knows and understands the web community we publish for. A person who is able to bring onboard advertisers and sponsors that will be helpful to our audience, and who will benefit from the exposure and visibility at Smashing. We are looking for a person with experience in nurturing long-term relationships with advertisers, while not being afraid to push for new sales.

We are a small family of 12, and we’ve all been working remotely for years now. By joining our team, you will have the opportunity to shape the role and work with the Magazine as well as the Events team to create sponsorship opportunities that truly benefit both sides of the arrangement. We also would be open to outsourcing this work to another company or working with someone on a freelance basis who provides these services to other companies.

What’s In It For You?

- A small, friendly, inclusive and diverse team that is aligned and very committed to doing great work;

- The ability to shape your work in a way that would work best for you;

- No lengthy meetings or micro-management: we do everything to ensure you can do your best work.

Role And Responsibilities

- You’ll be working with your existing contacts (those of which Smashing has already made) and find new contacts to sell advertising and sponsorship across the range of our products;

- You’ll be managing sponsors and advertisers once they come on board, ensuring that expectations are managed and deadlines on both sides understood;

- You’ll be exploring creative partnerships to ensure that sponsors get exposure they need while staying true to principles that Smashing stands for;

- Work closely with the our team to ensure that our commitments to sponsors are possible to fulfill given time and team availability;

- Being able to think creatively in terms of how we maximize sponsorship opportunities across our different outlets.

We’d Like You To:

- Have good written English, and ability to communicate clearly with sponsors from around the world;

- Be able to manage a flexible schedule in order to make calls to sponsors in timezones including the US West Coast;

- Be happy working in an asynchronous way, mostly via writing (we use Slack and Notion), given the distributed nature of the team and sponsors;

- Be conversant with web technologies to the extent of understanding who would be a good fit as a sponsor;

- Ideally, have existing connections with web companies;

- Fully remote, and probably fulltime. (Again, we also would be open to outsourcing this work to another company or working with someone on a freelance basis who provides these services to other companies.)

A Bit About Smashing

At Smashing, we focus on bringing quality content for web designers and developers, and support our community. The community around Smashing is indeed very important to us. They tell us when they like what we are doing, and also when they do not!

We are always looking for new ways to reach out to our community. Over the past year, we’ve taken conferences online and started running online workshops in response to the pandemic. Things will likely change over the coming year too, and we are keen to bring our existing sponsors along with us and continue to think creatively about how we can offer good value to them in a changing world.

Yet again, we are a very small team of dedicated people — fully distributed even before the pandemic. The majority of the team is in Europe, but we also have team members in the USA and Hong Kong. Therefore, location tends to be less important than an ability to work in a way that respects the time lag when dealing with multiple time zones.

Contact Details

If you are interested, please drop us an email at recruiting@smashing-media.com, tell us a bit about yourself and your experience, and why you’d like to be a part of the Smashing family. We can’t wait to hear from you!

New from WordPress.com Courses: Podcasting for Beginners

Would you like to learn how to create your own podcast or improve your existing podcast? WordPress.com Courses is excited to offer our new on-demand course, Podcasting for Beginners. We’ll help you get started, learn how to publish, and even how to use your podcast to make a living.

Our courses are flexible. You can join, and learn at your own pace. But that’s just the start. Podcasting for Beginners is more than just a course — it’s a community that gives you access to weekly Office Hours hosted by WordPress experts. A place where you can ask questions, share your progress, and pick up a few tips along the way.

Lessons include step-by-step videos covering:

- The Foundations (Curating your content and an editorial calendar.)

- Interviews (Recording, editing, and outreach.)

- Configuring Your Site (Integrating your podcast into your site and distributing it.)

- Growing Your Community (Engaging with listeners.)

- Making Money (Monetization basics and preparing for the future.)

Let us take you from “What is podcasting?” to launching a podcast of your own.

Cost: A $99 annual subscription gives you unlimited access to course content, our online community, and virtual sessions.

Join now as our first 100 customers will enjoy 50% off the subscription fee with the code PODCAST50.

State of the Word 2020

State of the Word is an annual keynote address delivered by WordPress project co-founder, Matt Mullenweg. This year’s keynote will be streamed on the WordPress.org blog, Facebook, YouTube, and Twitter on Thursday, Dec 17th, at 1600 UTC. You can view a replay of the event at any time after it airs on any of these platforms.

Sign up here to receive an email reminder, so you don’t miss the State of the Word broadcast!

We will only use this list to share State of the Word updates. Your personal information will not be used for anything else.

New to State of the Word?

If this is your first time hearing of this talk and want to learn more, you’re in luck! Check out previous recordings below.

How To Use MDX Stored In Sanity In A Next.js Website

Recently, my team took on a project to build an online, video-based learning platform. The project, called Jamstack Explorers, is a Jamstack app powered by Sanity and Next.js. We knew that the success of this project relied on making the editing experience easy for collaborators from different companies and roles, as well as retaining the flexibility to add custom components as needed.

To accomplish this, we decided to author content using MDX, which is Markdown with the option to include custom components. For our audience, Markdown is a standard approach to writing content: it’s how we format GitHub comments, Notion docs, Slack messages (kinda), and many other tools. The custom MDX components are optional and their usage is similar to shortcodes in WordPress and templating languages.

To make it possible to collaborate with contributors from anywhere, we decided to use Sanity as our content management system (CMS).

But how could we write MDX in Sanity? In this tutorial, we’ll break down how we set up MDX support in Sanity, and how to load and render that MDX in Next.js — powered website using a reduced example.

TL;DR

If you want to jump straight to the results, here are some helpful links:

- See the example repo used in this tutorial.

- See this approach in action on Jamstack Explorers.

- Check out the Jamstack Explorers source code.

How To Write Content Using MDX In Sanity

Our first step is to get our content management workflow set up. In this section, we’ll walk through setting up a new Sanity instance, adding support for writing MDX, and creating a public, read-only API that we can use to load our content into a website for display.

Create A New Sanity Instance

If you don’t already have a Sanity instance set up, let’s start with that. If you do already have a Sanity instance, skip ahead to the next section.

Our first step is to install the Sanity CLI globally, which allows us to install, configure, and run Sanity locally.

# install the Sanity CLI

npm i -g @sanity/cliIn your project folder, create a new directory called sanity, move into it, and run Sanity’s init command to create a new project.

# create a new directory to contain Sanity files

mkdir sanity

cd sanity/

sanity initThe init command will ask a series of questions. You can choose whatever makes sense for your project, but in this example we’ll use the following options:

- Choose a project name: Sanity Next MDX Example.

- Choose the default dataset configuration (“production”).

- Use the default project output path (the current directory).

- Choose “clean project” from the template options.

Install The Markdown Plugin For Sanity

By default, Sanity doesn’t have Markdown support. Fortunately, there’s a ready-made Sanity plugin for Markdown support that we can install and configure with a single command:

# add the Markdown plugin

sanity install markdownThis command will install the plugin and add the appropriate configuration to your Sanity instance to make it available for use.

Define A Custom Schema With A Markdown Input

In Sanity, we control every content type and input using schemas. This is one of my favorite features about Sanity, because it means that I have fine-grained control over what each content type stores, how that content is processed, and even how the content preview is built.

For this example, we’re going to create a simple page structure with a title, a slug to be used in the page URL, and a content area that expects Markdown.

Create this schema by adding a new file at sanity/schemas/page.js and adding the following code:

export default {

name: 'page',

title: 'Page',

type: 'document',

fields: [

{

name: 'title',

title: 'Page Title',

type: 'string',

validation: (Rule) => Rule.required(),

},

{

name: 'slug',

title: 'Slug',

type: 'slug',

validation: (Rule) => Rule.required(),

options: {

source: 'title',

maxLength: 96,

},

},

{

name: 'content',

title: 'Content',

type: 'markdown',

},

],

};We start by giving the whole content type a name and title. The type of document tells Sanity that this should be displayed at the top level of the Sanity Studio as a content type someone can create.

Each field also needs a name, title, and type. We can optionally provide validation rules and other options, such as giving the slug a max length and allowing it to be generated from the title value.

Add A Custom Schema To Sanity’s Configuration

After our schema is defined, we need to tell Sanity to use it. We do this by importing the schema into sanity/schemas/schema.js, then adding it to the types array passed to createSchema.

// First, we must import the schema creator

import createSchema from 'part:@sanity/base/schema-creator';

// Then import schema types from any plugins that might expose them

import schemaTypes from 'all:part:@sanity/base/schema-type';

+ // Import custom schema types here

+ import page from './page';

// Then we give our schema to the builder and provide the result to Sanity

export default createSchema({

// We name our schema

name: 'default',

// Then proceed to concatenate our document type

// to the ones provided by any plugins that are installed

types: schemaTypes.concat([

- / Your types here! /

+ page,

]),

});This puts our page schema into Sanity’s startup configuration, which means we’ll be able to create pages once we start Sanity up!

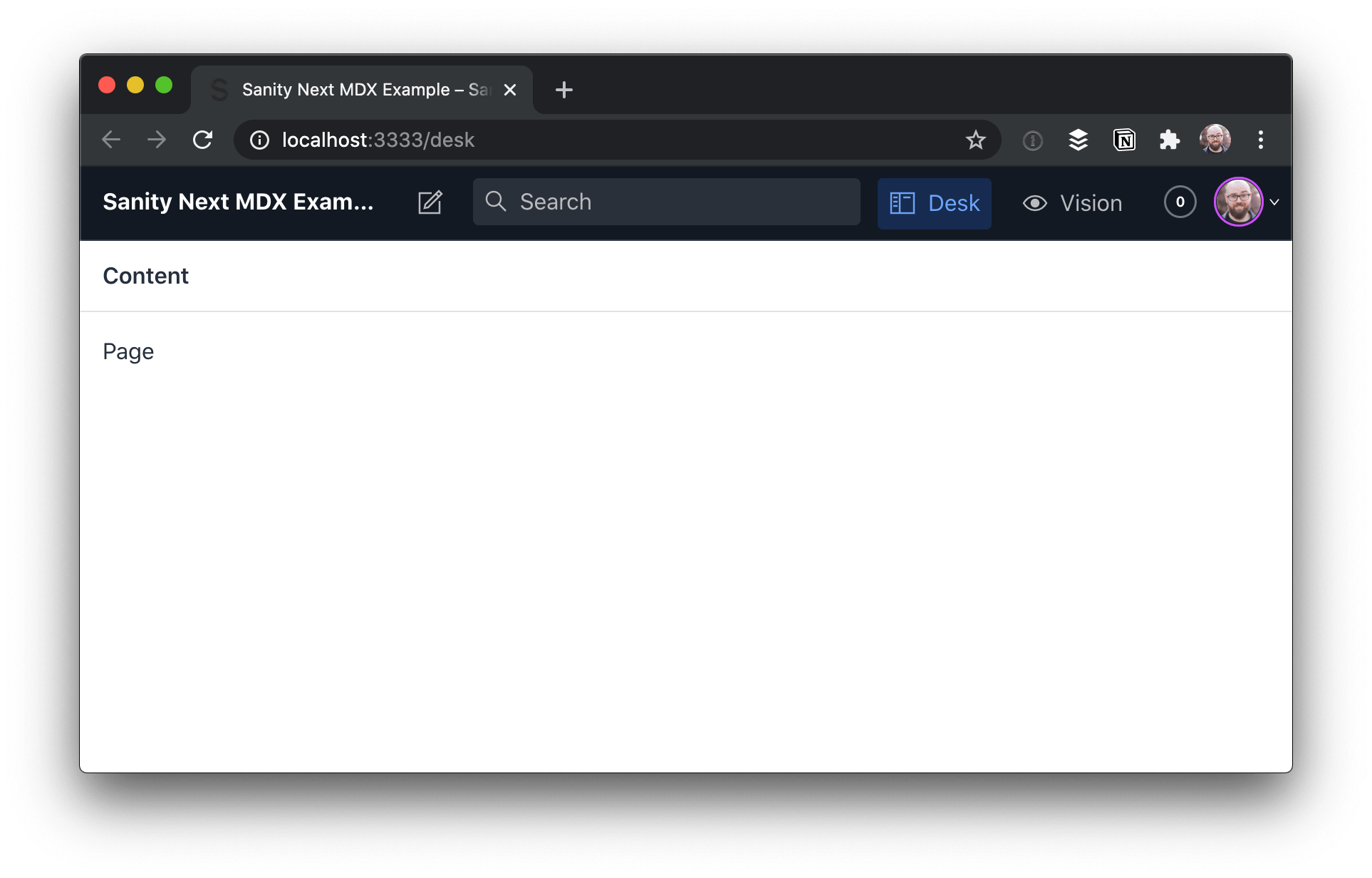

Run Sanity Studio Locally

Now that we have a schema defined and configured, we can start Sanity locally.

sanity startOnce it’s running, we can open Sanity Studio at http://localhost:3333 on our local machine.

When we visit that URL, we’ll need to log in the first time. Use your preferred account (e.g. GitHub) to authenticate. Once you get logged in, you’ll see the Studio dashboard, which looks pretty barebones.

To add a new page, click “Page”, then the pencil icon at the top-left.

Add a title and slug, then write some Markdown with MDX in the content area:

This is written in Markdown.

But what’s this?

<Callout>

Oh dang! Is this a React component in the middle of our content? 😱

</Callout>

Holy buckets! That’s amazing!Heads up! The empty line between the MDX component and the Markdown it contains is required. Otherwise the Markdown won’t be parsed. This will be fixed in MDX v2.

Once you have the content in place, click “Publish” to make it available.

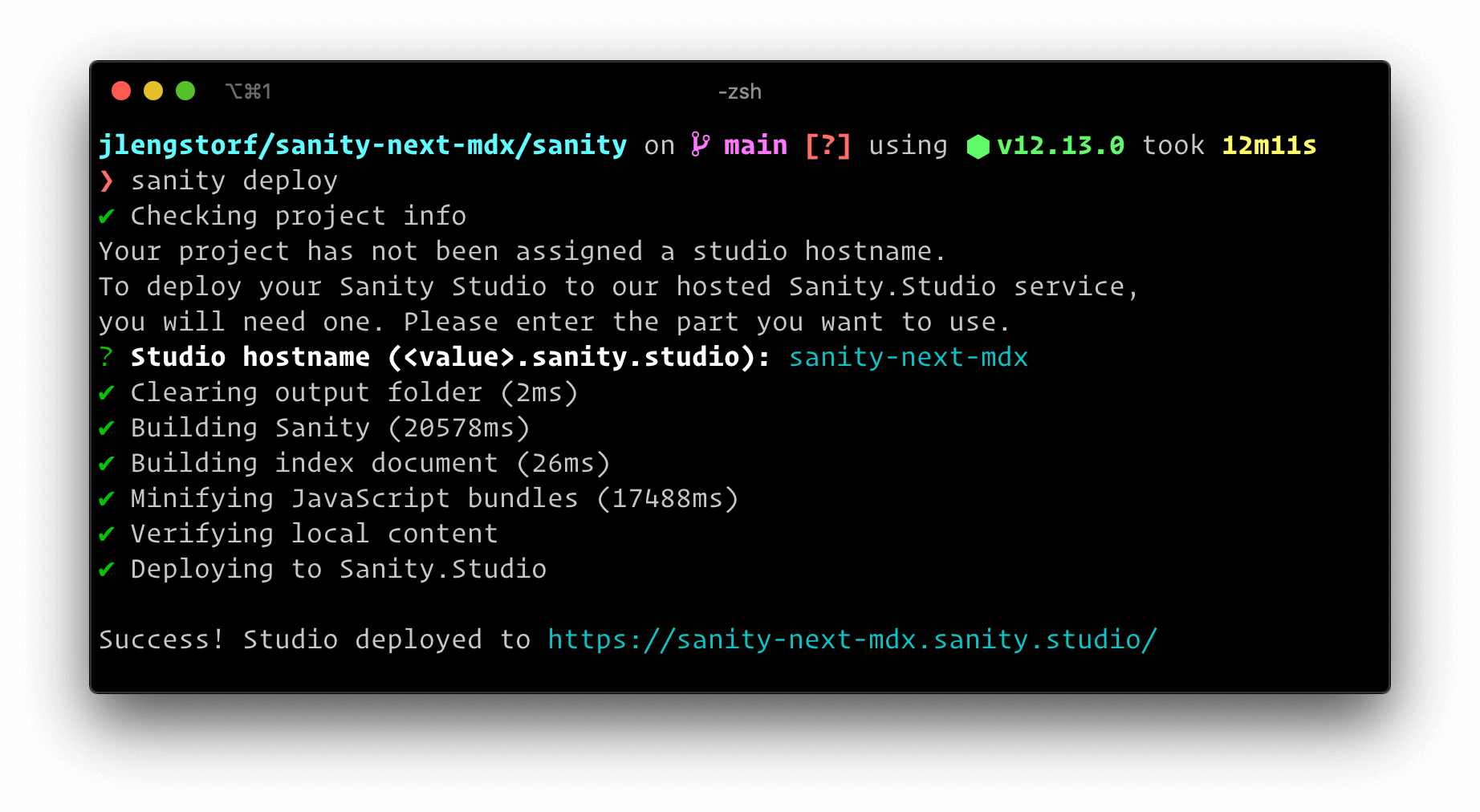

Deploy The Sanity Studio To A Production URL

In order to make edits to the site’s data without having to run the code locally, we need to deploy the Sanity Studio. The Sanity CLI makes this possible with a single command:

sanity deployChoose a hostname for the site, which will be used in the URL. After that, it will be deployed and reachable at your own custom link.

This provides a production URL for content editors to log in and make changes to the site content.

Make Sanity Content Available Via GraphQL

Sanity ships with support for GraphQL, which we’ll use to load our page data into our site’s front-end. To enable this, we need to deploy a GraphQL API, which is another one-liner:

sanity graphql deployWe can choose to enable a GraphQL Playground, which gives us a browser-based data explorer. This is extremely handy for testing queries.

Store the GraphQL URL — you’ll need it to load the data into Next.js!

https://sqqecrvt.api.sanity.io/v1/graphql/production/defaultThe GraphQL API is read-only for published content by default, so we don’t need to worry about keeping this secret — everything that this API returns is published, which means it’s what we want people to see.

Test Sanity GraphQL Queries In The Browser

By opening the URL of our GraphQL API, we’re able to test out GraphQL queries to make sure we’re getting the data we expect. These queries are copy-pasteable into our code.

To load our page data, we can build the following query using the “schema” tab at the right-hand side as a reference.

query AllPages {

allPage {

title

slug {

current

}

content

}

}This query loads all the pages published in Sanity, returning the title, current slug, and content for each. If we run this in the playground by pressing the play button, we can see our page returned.

Now that we’ve got page data with MDX in it coming back from Sanity, we’re ready to build a site using it!

In the next section, we’ll create an Next.js site that loads data from Sanity and renders our MDX content properly.

Display MDX In Next.js From Sanity

In an empty directory, start by initializing a new package.json, then install Next, React, and a package called next-mdx-remote.

# create a new package.json with the default options

npm init -y

# install the packages we need for this project

npm i next react react-dom next-mdx-remoteInside package.json, add a script to run next dev:

{

"name": "sanity-next-mdx",

"version": "1.0.0",

"scripts": {

+ "dev": "next dev"

},

"author": "Jason Lengstorf <jason@lengstorf.com>",

"license": "ISC",

"dependencies": {

"next": "^10.0.2",

"next-mdx-remote": "^1.0.0",

"react": "^17.0.1",

"react-dom": "^17.0.1"

}Create React Components To Use In MDX Content

In our page content, we used the <Callout> component to wrap some of our Markdown. MDX works by combining React components with Markdown, which means our first step is to define the React component our MDX expects.

Create a Callout component at src/components/callout.js:

export default function Callout({ children }) {

return (

<div

style={{

padding: '0 1rem',

background: 'lightblue',

border: '1px solid blue',

borderRadius: '0.5rem',

}}

>

{children}

</div>

);

}This component adds a blue box around content that we want to call out for extra attention.

Send GraphQL Queries Using The Fetch API

It may not be obvious, but you don’t need a special library to send GraphQL queries! It’s possible to send a query to a GraphQL API using the browser’s built-in Fetch API.

Since we’ll be sending a few GraphQL queries in our site, let’s add a utility function that handles this so we don’t have to duplicate this code in a bunch of places.

Add a utility function to fetch Sanity data using the Fetch API at src/utils/sanity.js:

export async function getSanityContent({ query, variables = {} }) {

const { data } = await fetch(

'https://sqqecrvt.api.sanity.io/v1/graphql/production/default',

{

method: 'POST',

headers: {

'Content-Type': 'application/json',

},

body: JSON.stringify({

query,

variables,

}),

},

).then((response) => response.json());

return data;

}The first argument is the Sanity GraphQL URL that Sanity returned when we deployed the GraphQL API.

GraphQL queries are always sent using the POST method and the application/json content type header.

The body of a GraphQL request is a stringified JSON object with two properties: query, which contains the query we want to execute as a string; and variables, which is an object containing any query variables we want to pass into the GraphQL query.

The response will be JSON, so we need to handle that in the .then for the query result, and then we can destructure the result to get to the data inside. In a production app, we’d want to check for errors in the result as well and display those errors in a helpful way, but this is a post about MDX, not GraphQL, so #yolo.

Heads up! The Fetch API is great for simple use cases, but as your app becomes more complex you’ll probably want to look into the benefits of using a GraphQL-specific tool like Apollo or urql.

Create A Listing Of All Pages From Sanity In Next.js

To start, let’s make a list of all the pages published in Sanity, as well as a link to their slug (which won’t work just yet).

Create a new file at src/pages/index.js and put the following code inside:

import Link from 'next/link';

import { getSanityContent } from '../utils/sanity';

export default function Index({ pages }) {

return (

<div>

<h1>This Site Loads MDX From Sanity.io</h1>

<p>View any of these pages to see it in action:</p>

<ul>

{pages.map(({ title, slug }) => (

<li key={slug}>

<Link href={`/${slug}`}>

<a>{title}</a>

</Link>

</li>

))}

</ul>

</div>

);

}

export async function getStaticProps() {

const data = await getSanityContent({

query: `

query AllPages {

allPage {

title

slug {

current

}

}

}

`,

});

const pages = data.allPage.map((page) => ({

title: page.title,

slug: page.slug.current,

}));

return {

props: { pages },

};

}In getStaticProps we call the getSanityContent utility with a query that loads the title and slug of all pages in Sanity. We then map over the page data to create a simplified object with a title and slug property for each page and return that array as a pages prop.

The Index component to display this page receives that page’s prop, so we map over that to output an unordered list of links to the pages.

Start the site with npm run dev and open http://localhost:3000 to see the work in progress.

If we click a page link right now, we’ll get a 404 error. In the next section we’ll fix that!

Generate Pages Programatically In Next.js From CMS Data

Next.js supports dynamic routes, so let’s set up a new file to catch all pages except our home page at src/pages/[page].js.

In this file, we need to tell Next what the slugs are that it needs to generate using the getStaticPaths function.

To load the static content for these pages, we need to use getStaticProps, which will receive the current page slug in params.page.

To help visualize what’s happening, we’ll pass the slug through to our page and log the props out on screen for now.

import { getSanityContent } from '../utils/sanity';

export default function Page(props) {

return <pre>{JSON.stringify(props, null, 2)}</pre>;

}

export async function getStaticProps({ params }) {

return {

props: {

slug: params.page,

},

};

}

export async function getStaticPaths() {

const data = await getSanityContent({

query: `

query AllPages {

allPage {

slug {

current

}

}

}

`,

});

const pages = data.allPage;

return {

paths: pages.map((p) => `/${p.slug.current}`),

fallback: false,

};

}If the server is already running this will reload automatically. If not, run npm run dev and click one of the page links on http://localhost:3000 to see the dynamic route in action.

Load Page Data From Sanity For The Current Page Slug In Next.js

Now that we have the page slug, we can send a request to Sanity to load the content for that page.

Using the getSanityContent utility function, send a query that loads the current page using its slug, then pull out just the page’s data and return that in the props.

export async function getStaticProps({ params }) {

+ const data = await getSanityContent({

+ query: + query PageBySlug($slug: String!) {

+ allPage(where: { slug: { current: { eq: $slug } } }) {

+ title

+ content

+ }

+ }

+,

+ variables: {

+ slug: params.page,

+ },

+ });

+

+ const [pageData] = data.allPage;

return {

props: {

- slug: params.page,

+ pageData,

},

};

}After reloading the page, we can see that the MDX content is loaded, but it hasn’t been processed yet.

Render MDX From A CMS In Next.js With Next-mdx-remote

To render the MDX, we need to perform two steps:

-

For the build-time processing of MDX, we need to render the MDX to a string. This will turn the Markdown into HTML and ensure that the React components are executable. This is done by passing the content as a string into

renderToStringalong with an object containing the React components we want to be available in MDX content. -

For the client-side rendering of MDX, we hydrate the MDX by passing in the rendered string and the React components. This makes the components available to the browser and unlocks interactivity and React features.

While this might feel like doing the work twice, these are two distinct processes that allow us to both create fully rendered HTML markup that works without JavaScript enabled and the dynamic, client-side functionality that JavaScript provides.

Make the following changes to src/pages/[page].js to render and hydrate MDX:

+ import hydrate from 'next-mdx-remote/hydrate';

+ import renderToString from 'next-mdx-remote/render-to-string';

import { getSanityContent } from '../utils/sanity';

+ import Callout from '../components/callout';

- export default function Page(props) {

- return <pre>{JSON.stringify(props, null, 2)}</pre>;

+ export default function Page({ title, content }) {

+ const renderedContent = hydrate(content, {

+ components: {

+ Callout,

+ },

+ });

+

+ return (

+ <div>

+ <h1>{title}</h1>

+ {renderedContent}

+ </div>

+ );

}

export async function getStaticProps({ params }) {

const data = await getSanityContent({

query: `

query PageBySlug($slug: String!) {

allPage(where: { slug: { current: { eq: $slug } } }) {

title

content

}

}

`,

variables: {

slug: params.page,

},

});

const [pageData] = data.allPage;

+ const content = await renderToString(pageData.content, {

+ components: { Callout },

+ });

return {

props: {

- pageData,

+ title: pageData.title,

+ content,

},

};

}

export async function getStaticPaths() {

const data = await getSanityContent({

query: `

query AllPages {

allPage {

slug {

current

}

}

}

`,

});

const pages = data.allPage;

return {

paths: pages.map((p) => `/${p.slug.current}`),

fallback: false,

};

}After saving these changes, reload the browser and we can see the page content being rendered properly, custom React components and all!

Use MDX With Sanity And Next.js For Flexible Content Workflows

Now that this code is set up, content editors can quickly write content using MDX to enable the speed of Markdown with the flexibility of custom React components, all from Sanity! The site is set up to generate all the pages published in Sanity, so unless we want to add new custom components we don’t need to touch the Next.js code at all to publish new pages.

What I love about this workflow is that it lets me keep my favorite parts of several tools: I really like writing content in Markdown, but my content also needs more flexibility than the standard Markdown syntax provides; I like building websites with React, but I don’t like managing content in Git.

Beyond this, I also have access to the huge amount of customization made available in both the Sanity and React ecosystems, which feels like having my cake and eating it, too.

If you’re looking for a new content management workflow, I hope you enjoy this one as much as I do!

What’s Next?

Now that you’ve got a Next site using MDX from Sanity, you may want to go further with these tutorials and resources:

- See the example repo used in this tutorial.

- Deploy a Next.js Site to Netlify.

- See this approach in action on Jamstack Explorers.

- Check out a production implementation of this in the Jamstack Explorers repo.

- Learn about Next from Cassidy Williams.

- Check out the Sanity documentation.

What will you build with this workflow? Let me know on Twitter!

How to Create a Simple Event Calendar with Sugar Calendar

Do you want to add an event calendar to your WordPress website?

There are lots of different plugins and calendar apps that can do this, but some are much too complicated or don’t work very well with WordPress.

In this article, we’ll show you how to easily create a simple event calendar in WordPress with Sugar Calendar.

How to Create a Simple Event Calendar with Sugar Calendar

Sugar Calendar is a simple and lightweight events calendar plugin for WordPress built by Pippin Williamson and the team behind Easy Digital Downloads.

Since we were looking for a lightweight event calendar plugin, we decided to give Sugar Calendar a try, and found it quite easy to use.

Here’s how to create an event calendar in WordPress with Sugar Calendar.

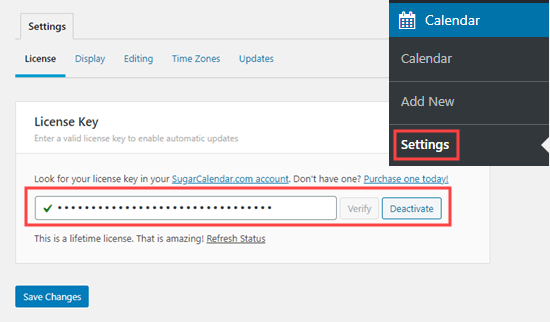

First thing you need to do is install and activate the Sugar Calendar plugin on your website. For more details, see our step by step guide on how to install a WordPress plugin.

Upon activation, go to the Calendar » Settings page in your WordPress admin. Here, you need to enter your license key. You will find this in your account area on the Sugar Calendar website.

Once you’re done, click the Save Changes button to make sure your license key is saved.

Creating a Calendar in Sugar Calendar

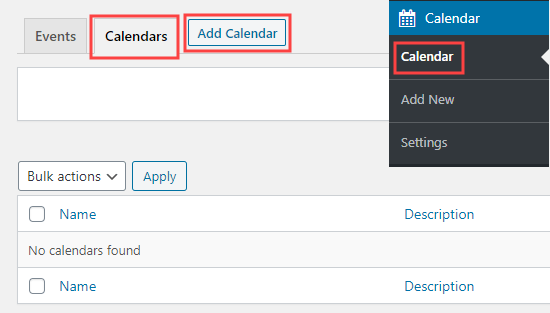

Sugar Calendar divides the functionality into two main sections: Calendars and Events.

You can create multiple calendars, and then add different events or actvities to each calendar accordingly.

To get started, click on the ‘Calendar’ menu item page in your WordPress admin sidebar. After that, you need to select the Calendar tab then click on the ‘Add New Calendar’ button to create a new calendar.

You will then see a popup box for adding your new calendar.

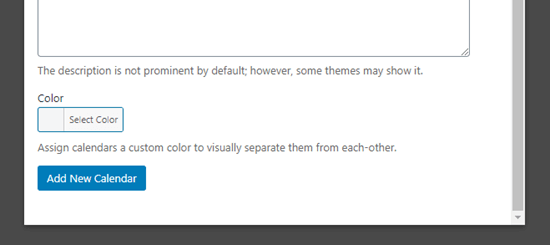

You need to give your calendar a name and a ‘slug‘ which will become the end part of the calendar’s URL (web address).

There’s a box where you can describe your calendar. The description is optional, and you can leave it blank if you want.

Below the description, you can select a color for your calendar. This is very useful if you’re creating multiple calendars because this will make it easier to identify different calendars on your WordPress website.

If you’re just creating one calendar, then you don’t need to set a color.

When you’re done, click the ‘Add New Calendar’ button to create your calendar.

Adding Events in Sugar Calendar

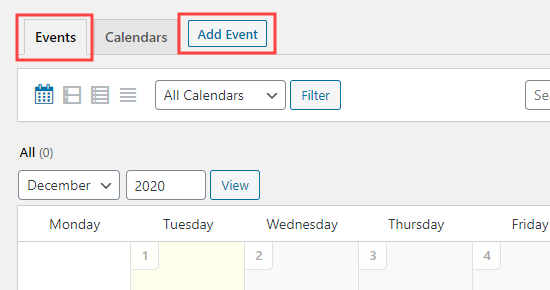

Now, it’s time to add some events to your calendar. Just click on the Events tab then click the ‘Add Event’ button to create your first event.

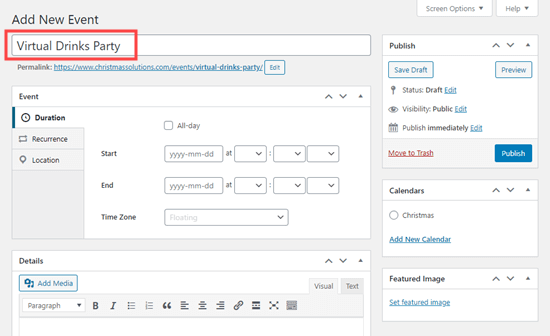

The ‘Add New Event’ screen has space for all the information about your event. Go ahead and enter the name for your event at the top.

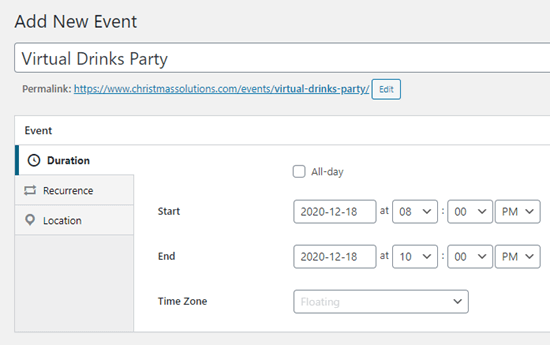

Below this, you can set the start time and end time for your event.

Your event can be on a single day or across multiple days. If it’s an all-day event, then simply check the ‘All-day’ box.

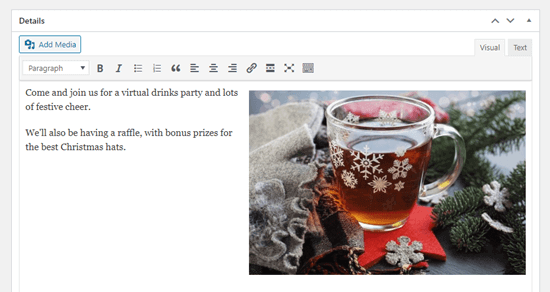

Next, go ahead and add more information about your event in the Details box. If you would like to include images or photos here, go ahead and add those using the ‘Add Media’ buttons.

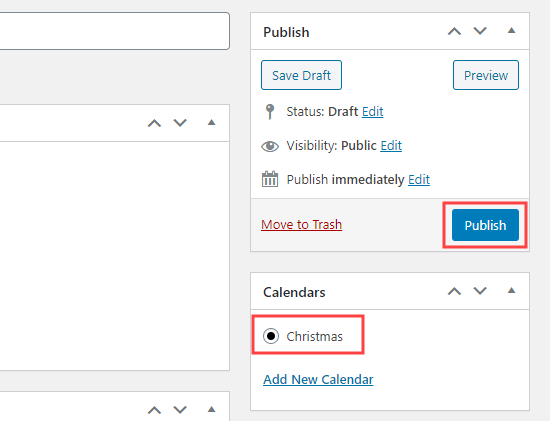

On the right-hand side of the screen, you need to click on the calendar that you want to add your event onto. You can also create a calendar here if you haven’t done so already.

When you’re ready, go ahead and click the Publish button.

You can repeat this process to add more events to your calendar.

Putting Your Events Calendar on Your Website

You can add your calendar to any page or post on your website. You can even use the Sugar Calendar widget to add it to your sidebar.

We’re going to add our calendar to a new page on our demo site.

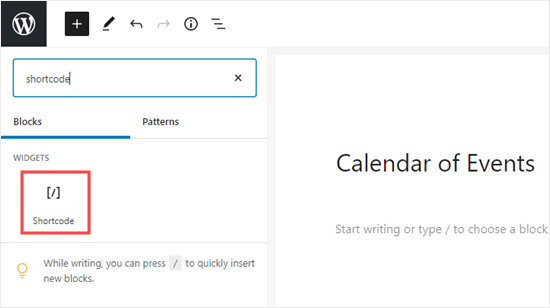

First, go to Pages » Add New. Then, enter a title for your page and add a shortcode box to your page.

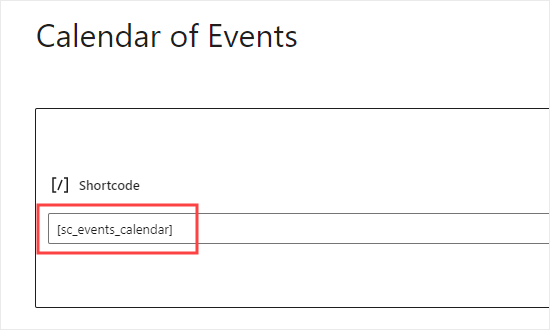

Next, copy the shortcode [sc_events_calendar] into the shortcode block.

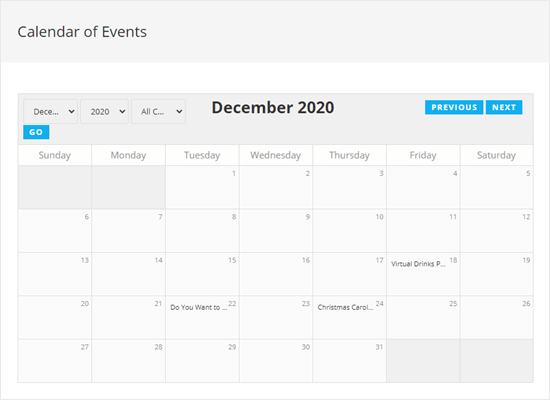

Now, it’s time to preview or publish your page. You will then see the calendar of events on your website.

Visitors on your website can click on an event to see the full details.

Note: This events page will use the fonts, colors, etc from your theme. That means it will match the posts and pages on your blog.

We hope this article helped you learn how to create a simple event calendar with Sugar Calendar. You might also like our article on the best email marketing services and best push notification software, so you can keep your users updated about new events and activities.

If you liked this article, then please subscribe to our YouTube Channel for WordPress video tutorials. You can also find us on Twitter and Facebook.

The post How to Create a Simple Event Calendar with Sugar Calendar appeared first on WPBeginner.

Building A Conversational N.L.P Enabled Chatbot Using Google’s Dialogflow

Ever since ELIZA (the first Natural Language Processing computer program brought to life by Joseph Weizenbaum in 1964) was created in order to process user inputs and engage in further discussions based on the previous sentences, there has been an increased use of Natural Language Processing to extract key data from human interactions. One key application of Natural language processing has been in the creation of conversational chat assistants and voice assistants which are used in mobile and web applications to act as customer care agents attending to the virtual needs of customers.

In 2019, the Capgemini Research Institute released a report after conducting a survey on the impact which chat assistants had on users after being incorporated by organizations within their services. The key findings from this survey showed that many customers were highly satisfied with the level of engagement they got from these chat assistants and that the number of users who were embracing the use of these assistants was fast growing!

To quickly build a chat assistant, developers and organizations leverage SaaS products running on the cloud such as Dialogflow from Google, Watson Assistant from IBM, Azure Bot Service from Microsoft, and also Lex from Amazon to design the chat flow and then integrate the natural language processing enabled chat-bots offered from these services into their own service.

This article would be beneficial to developers interested in building conversational chat assistants using Dialogflow as it focuses on the Dialogflow itself as a Service and how chat assistants can be built using the Dialogflow console.

Note: Although the custom webhooks built within this article are well explained, a fair understanding of the JavaScript language is required as the webhooks were written using JavaScript.

Dialogflow

Dialogflow is a platform that simplifies the process of creating and designing a natural language processing conversational chat assistant which can accept voice or text data when being used either from the Dialogflow console or from an integrated web application.

To understand how Dialogflow simplifies the creation of a conversational chat assistant, we will use it to build a customer care agent for a food delivery service and see how the built chat assistant can be used to handle food orders and other requests of the service users.

Before we begin building, we need to understand some of the key terminologies used on Dialogflow. One of Dialogflow’s aim is to abstract away the complexities of building a Natural Language Processing application and provide a console where users can visually create, design, and train an AI-powered chatbot.

Dialog Flow Terminologies

Here is a list of the Dialogflow terminologies we will consider in this article in the following order:

-

Agent

An agent on Dialogflow represents the chatbot created by a user to interact with other end-users and perform data processing operations on the information it receives. Other components come together to form an agent and each time one of these components is updated, the agent is immediately re-trained for the changes to take effect.User’s who want to create a full-fledged conversational chatbot within the quickest time possible can select an agent from the prebuilt agents which can be likened to a template which contains the basic intents and responses needed for a conversational assistant.

Note: A conversational assistant on Dialogflow will now be referred to as an “agent” while someone else asides the author of the assistant who interacts with it would be referred to as an “end-user”.

-

Intent

Similar to its literal meaning, the intent is the user’s end goal in each sentence when interacting with an agent. For a single agent, multiple intents can be created to handle each sentence within a conversation and they are connected together using Contexts.From the intent, an agent is able to understand the end-goal of a sentence. For example, an agent created to process food orders from customers would be to recognize the end-goal of a customer to place an order for a meal or get recommendations on the available meals from a menu using the created intents.

-

Entity

Entities are a means by which Dialogflow processes and extracts specific data from an end-user’s input. An example of this is a Car entity added to an intent. Names of vehicles would be extracted from each sentence input as the Car entity.By default, an agent has some System entities which have predefined upon its creation. Dialogflow also has the option to define custom entities and add values recognizable within this entity.

-

Training Phrase

The training phrases is a major way in which an agent is able to recognize the intent of an end-user interacting with the agent. Having a large number of training phrases within an intent increases the accuracy of the agent to recognize an intent, in fact Dialogflow’s documentation on training phases recommends that “at least 10-20” training phrases be added to a created intent.To make training phrases more reusable, dialogflow gives the ability to annotate specific words within the training phrase. When a word within a phrase is annotated, dialogflow would recognize it as a placeholder for values that would be provided in an end-user’s input.

-

Context

Contexts are string names and they are used to control the flow of a conversation with an agent. On each intent, we can add multiple input contexts and also multiple output contexts. When the end-user makes a sentence that is recognized by an intent the output contexts become active and one of them is used to match the next intent.To understand contexts better, we can illustrate context as the security entry and exit door, while the intent as the building. The input context is used when coming into the building and it accepts visitors that have been listed in the intent while the exit door is what connects the visitors to another building which is another intent.

-

Knowledge base

A knowledge base represents a large pool of information where an agent can fetch data when responding to an intent. This could be a document in any format such astxt,pdf,csvamong other supported document types. In machine learning, a knowledge base could be referred to as a training dataset.An example scenario where an agent might refer to a knowledge base would be where an agent is being used to find out more details about a service or business. In this scenario, an agent can refer to the service’s Frequently Asked Questions as its knowledge base.

-

Fulfillment

Dialogflow’s Fulfillment enables an agent to give a more dynamic response to a recognized intent rather than a static created response. This could be by calling a defined service to perform an action such as creating or retrieving data from a database.An intent’s fulfillment is achieved through the use of a webhook. Once enabled, a matched intent would make an API request to the webhook configured for the dialogflow agent.

Now, that we have an understanding of the terminologies used with Dialogflow, we can move ahead to use the Dialogflow console to create and train our first agent for a hypothetical food service.

Using The Dialogflow Console

Note: Using the Dialogflow console requires that a Google account and a project on the Google Cloud Platform is created. If unavailable, a user would be prompted to sign in and create a project on first use.

The Dialogflow console is where the agent is created, designed, and trained before integrating with other services. Dialogflow also provides REST API endpoints for users who do not want to make use of the console when building with Dialogflow.

While we go through the console, we will gradually build out the agent which would act as a customer care agent for a food delivery service having the ability to list available meals, accept a new order and give information about a requested meal.

The agent we’ll be building will have the conversation flow shown in the flow chart diagram below where a user can purchase a meal or get the list of available meals and then purchase one of the meals shown.

Creating A New Agent

Within every newly created project, Dialogflow would prompt the first time user to create an agent which takes the following fields:

- A name to identify the agent.

- A language which the agent would respond in. If not provided the default of English is used.

- A project on the Google Cloud to associate the agent with.

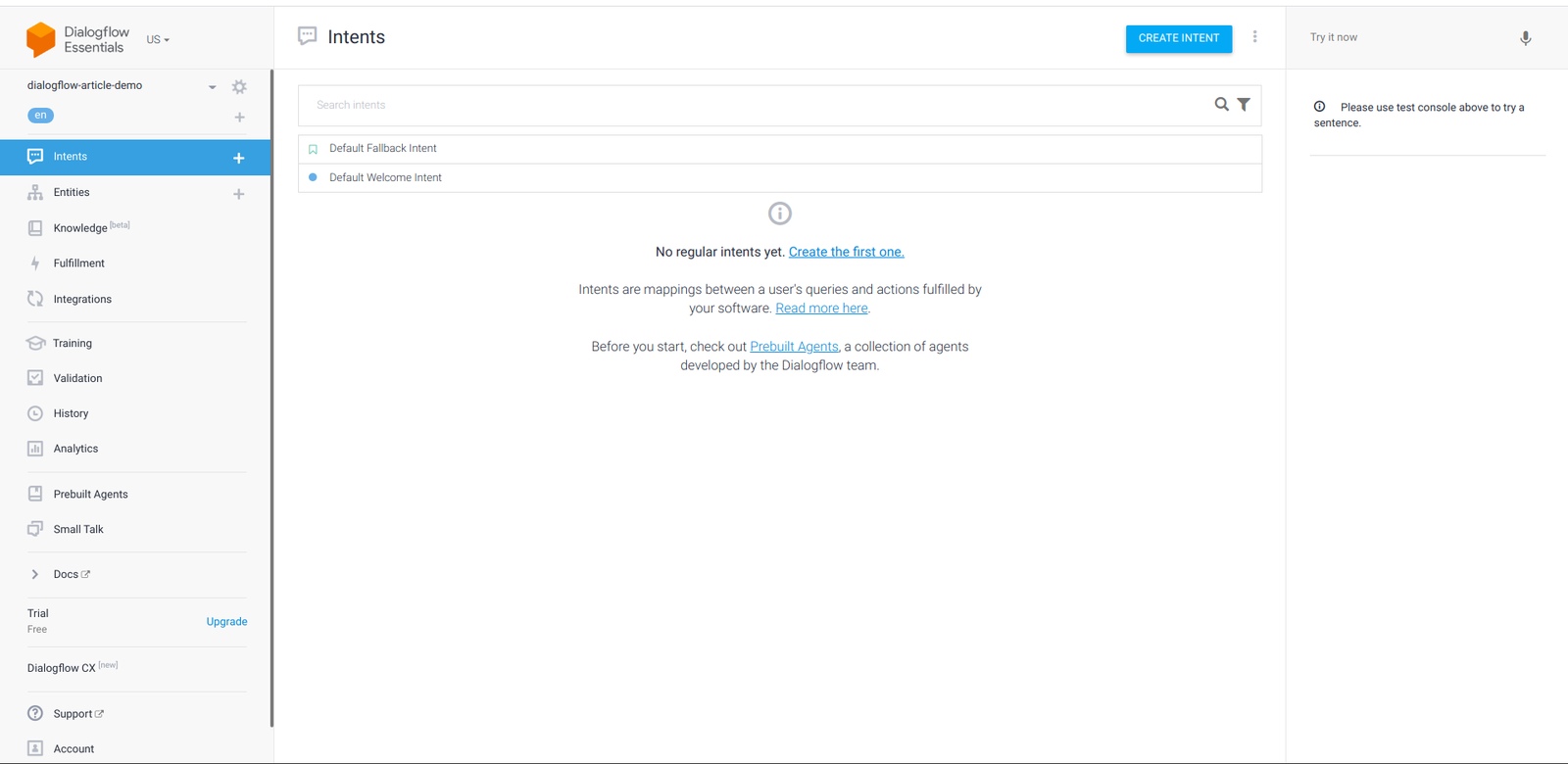

Immediately after we click on the create button after adding the values of the fields above, a new agent would be saved and the intents tab would be shown with the Default fallback and Default Welcome intent as the only two available intents which are created by default with every agent on Dialogflow.

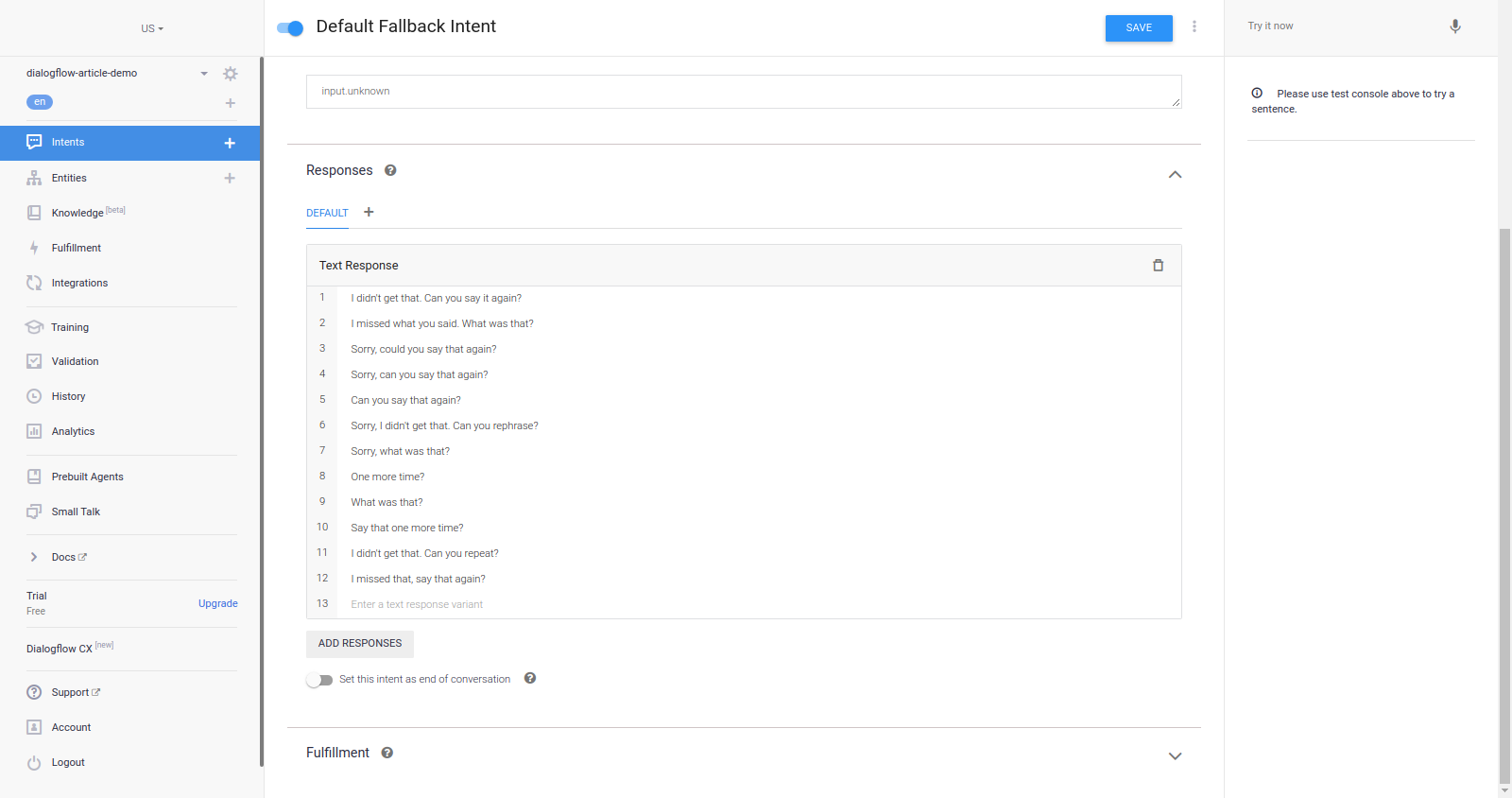

Exploring the Default fallback intent, we can see it has no training phrase but has sentences such as “Sorry, could you say that again?”, “What was that?”, “Say that one more time?” as responses to indicate that the agent was not able to recognize a sentence which has been made by an end-user. During all conversations with the agent, these responses are only used when the agent cannot recognize a sentence typed or spoken by a user.

While the sentences above are sufficient for indicating that agent does not understand the last typed sentence, we would like to aid the end-user by giving them some more information to hint the user on what the agent can recognize. To do this, we replace all the listed sentences above with the following ones and click the Save button for the agent to be retrained.

I didn't get that. I am Zara and I can assist you in purchasing or learning more about the meals from Dialogflow-food-delivery service. What would you like me to do?

I missed what you said. I'm Zara here and I can assist you in purchasing or learning more about the meals from Dialogflow-food-delivery service. What would you like me to do?

Sorry, I didn't get that. Can you rephrase it? I'm Zara by the way and I can assist you in purchasing or learning more about the meals from Dialogflow-food-delivery service.

Hey, I missed that I'm Zara and I can assist you in purchasing or learning more about the meals from Dialogflow-food-delivery service. What would you like me to do?From each of the four sentences above, we see can observe that the agent could not recognize what the last sentence made was and also a piece of information on what the agent can do thus hinting the user on what to type next in order to continue the conversation.

Moving next to the Default Welcome Intent, the first section on the intent page is the Context section and expanding it we can see both the input and output contexts are blank. From the conversation flow of the agent shown previously, we want an end-user to either place a meal order or request a list of all available meals. This would require the two following new output contexts they would each become active when this intent is matched;

-

awaiting_order_request

This would be used to match the intent handling order requests when an end-user wants to place an order for a meal. -

awaiting_info_request

This would be used to match the intent that retrieves data of all the meals when an end-user wants to know the available meals.

After the context section is the intent’s Events and we can see it has the Welcome event type added to the list of events indicating that this intent will be used first when the agent is loaded.

Coming next are the Training Phrases for the intent. Due to being created by default, it already has 16 phrases that an end-user would likely type or say when they interact with the agent for the first time.

When an end-user types or makes a sentence similar to those listed in the training phrases above, the agent would respond using a picked response from the Responses list section shown below:

Each of the responses above is automatically generated for every agent on Dialogflow. Although they are grammatically correct, we would not use them for our food agent. Being a default intent that welcomes an end-user to our agent, a response from the agent should tell what organization it belongs to and also list its functionalities in a single sentence.

We would delete all the responses above and replace them with the ones below to better help inform an end-user on what to do next with the agent.

1. Hello there, I am Zara and I am here to assist you to purchase or learn about the meals from the Dialogflow-food-delivery service. What would you like me to do?

2. Hi, I am Zara and I can assist you in purchasing or learning more about the meals from the Dialogflow-food-delivery service. What would you like me to do?From the two responses above, we can see it tells an end-user what the name of the bot is, the two things the agent can do, and lastly, it pokes the end-user to take further action. Taking further action further from this intent means we need to connect the Default Welcome Intent to another. This is possible on Dialogflow using context.

When we add and save those two phrases above, dialogflow would immediately re-train the agent so I can respond using any one of them.

Next, we move on to create two more intents to handle the functionalities which we have added in the two responses above. One to purchase a food item and the second to get more information about meals from our food service.

Creating list-meals intent:

Clicking the + ( add ) icon from the left navigation menu would navigate to the page for creating new intents and we name this intent list-available-meals.

From there we add an output context with the name awaiting-order-request. This output context would be used to link this intent to the next one where they order a meal as we expect an end-user to place an order for a meal after getting the list of meals available.

Moving on to the Training Phrases section on the intent page, we will add the following phrases provided by the end-user in order to find out which meals are available.

Hey, I would like to know the meals available.

What items are on your menu?

Are there any available meals?

I would like to know more about the meals you offer.Next, we would add just the single fallback response below to the Responses section;

Hi there, the list of our meals is currently unavailable. Please check back in a few minutes as the items on the list are regularly updated.From the response above we can observe that it indicates that the meal’s list is unavailable or an error has occurred somewhere. This is because it is a fallback response and would only be used when an error occurs in fetching the meals. The main response would come as a fulfillment using the webhooks option which we will set up next.

The last section in this intent page is the Fulfillment section and it is used to provide data to the agent to be used as a response from an externally deployed API or source. To use it we would enable the Webhook call option in the Fulfillment section and set up the fulfillment for this agent from the fulfillment tab.

Managing Fulfillment:

From the Fulfillment tab on the console, a developer has the option of using a webhook which gives the ability to use any deployed API through its endpoint or use the Inline Code editor to create a serverless application to be deployed as a cloud function on the Google Cloud. If you would like to know more about serverless applications, this article provides an excellent guide on getting started with serverless applications.

Each time an end-user interacts with the agent and the intent is matched, a POST) request would be made to the endpoint. Among the various object fields in the request body, only one is of concern to us, i.e. the queryResult object as shown below:

{

"queryResult": {

"queryText": "End-user expression",

"parameters": {

"param-name": "param-value"

},

},

}While there are other fields in the queryResult such as a context, the parameters object is more important to us as it holds the parameter extracted from the user’s text. This parameter would be the meal a user is requesting for and we would use it to query the food delivery service database.

When we are done setting up the fulfillment, our agent would have the following structure and flow of data to it:

From the diagram above, we can observe that the cloud function acts as a middleman in the entire structure. The Dialogflow agent sends the parameter extracted from an end user’s text to the cloud function in a request payload and the cloud function, in turn, queries the database for the document using the received name and sends back the queried data in a response payload to the agent.

To start an implementation of the design system above, we would begin with creating the cloud function locally in a development machine then connect it to our dialogflow agent using the custom webhook option. After it has been tested, we can switch to using the inline editor in the fulfillment tab to create and deploy a cloud function to work with it. We begin this process by running the following commands from the command line:

# Create a new project and ( && ) move into it.

mkdir dialogflow-food-agent-server && cd dialogflow-food-agent-server

# Create a new Node project

yarn init -y

# Install needed packages

yarn add mongodb @google-cloud/functions-framework dotenvAfter installing the needed packages, we modify the generated package.json file to include two new objects which enable us to run a cloud function locally using the Functions Framework.

// package.json

{

"main": "index.js",

"scripts": {

"start": "functions-framework --target=foodFunction --port=8000"

},

}

The start command in the scripts above tells the functions Framework to run the foodFunction in the index.js file and also makes it listen and serve connections through our localhost on port 8000.

Next is the content of the index.js file which holds the function; we’ll make use of the code below since it connects to a MongoDB database and queries the data using the parameter passed in by the Dialogflow agent.

require("dotenv").config();

exports.foodFunction = async (req, res) => {

const { MongoClient } = require("mongodb");

const CONNECTION_URI = process.env.MONGODB_URI;

// initate a connection to the deployed mongodb cluster

const client = new MongoClient(CONNECTION_URI, {

useNewUrlParser: true,

});

client.connect((err) => {

if (err) {

res

.status(500)

.send({ status: "MONGODB CONNECTION REFUSED", error: err });

}

const collection = client.db(process.env.DATABASE_NAME).collection("Meals");

const result = [];

const data = collection.find({});

const meals = [

{

text: {

text: [

We currently have the following 20 meals on our menu list. Which would you like to request for?,

],

},

},

];

result.push(

data.forEach((item) => {

const { name, description, price, image_uri } = item;

const card = {

card: {

title: ${name} at $${price},

subtitle: description,

imageUri: image_uri,

},

};

meals.push(card);

})

);

Promise.all(result)

.then((_) => {

const response = {

fulfillmentMessages: meals,

};

res.status(200).json(response);

})

.catch((e) => res.status(400).send({ error: e }));

client.close();

});

};

From the code snippet above we can see that our cloud function is pulling data from a MongoDB database, but let’s gradually step through the operations involved in pulling and returning this data.

-

First, the cloud function initiates a connection to a MongoDB Atlas cluster, then it opens the collection storing the meal category documents within the database being used for the food-service on the cluster.

-

Next, using the parameter passed into the request from the user’s input, we run a find method on the collection to get which then returns a cursor which we further iterate upon to get all the MongoDB documents within the collection containing the data.

- We model the data returned from MongoDB into Dialogflow’s Rich response message object structure which displays each of the meal items to the end-user as a card with an image, title, and a description.

- Finally, we send back the entire data to the agent after the iteration in a JSON body and end the function’s execution with a

200status code.

Note: The Dialogflow agent would wait for a response after a request has been sent within a frame of 5 seconds. This waiting period is when the loading indicator is shown on the console and after it elapses without getting a response from the webhook, the agent would default to using one of the responses added in the intent page and return a DEADLINE EXCEEDED error. This limitation is worth taking note of when designing the operations to be executed from a webhook. The API error retries section within the Dialogflow best practices contains steps on how to implement a retry system.

Now, the last thing needed is a .env file created in the project directory with the following fields to store the environment variables used in the index.js.

#.env

MONGODB_URI = "MONGODB CONNECTION STRING"

DATABASE_NAME = ""At this point, we can start the function locally by running yarn start from the command line in the project’s directory. For now, we still cannot make use of the running function as Dialogflow only supports secure connections with an SSL certificate, and where Ngrok comes into the picture.

Using Ngrok, we can create a tunnel to expose the localhost port running the cloud function to the internet with an SSL certificate attached to the secured connection using the command below from a new terminal;

ngrok http -bind-tls=true 8000This would start the tunnel and generate a forwarding URL which would be used as an endpoint to the function running on a local machine.

Note: The extra -bind-tls=true argument is what instructs Ngrok to create a secured tunnel rather than the unsecured connection which it creates by default.

Now, we can copy the URL string opposite the forwarding text in the terminal and paste in the URL input field which is found in the Webhook section, and then save it.

To test all that has been done so far, we would make a sentence to the Dialogflow agent requesting the list of meals available using the Input field at the top right section in the Dialogflow console and watch how it waits for and uses a response sent from the running function.

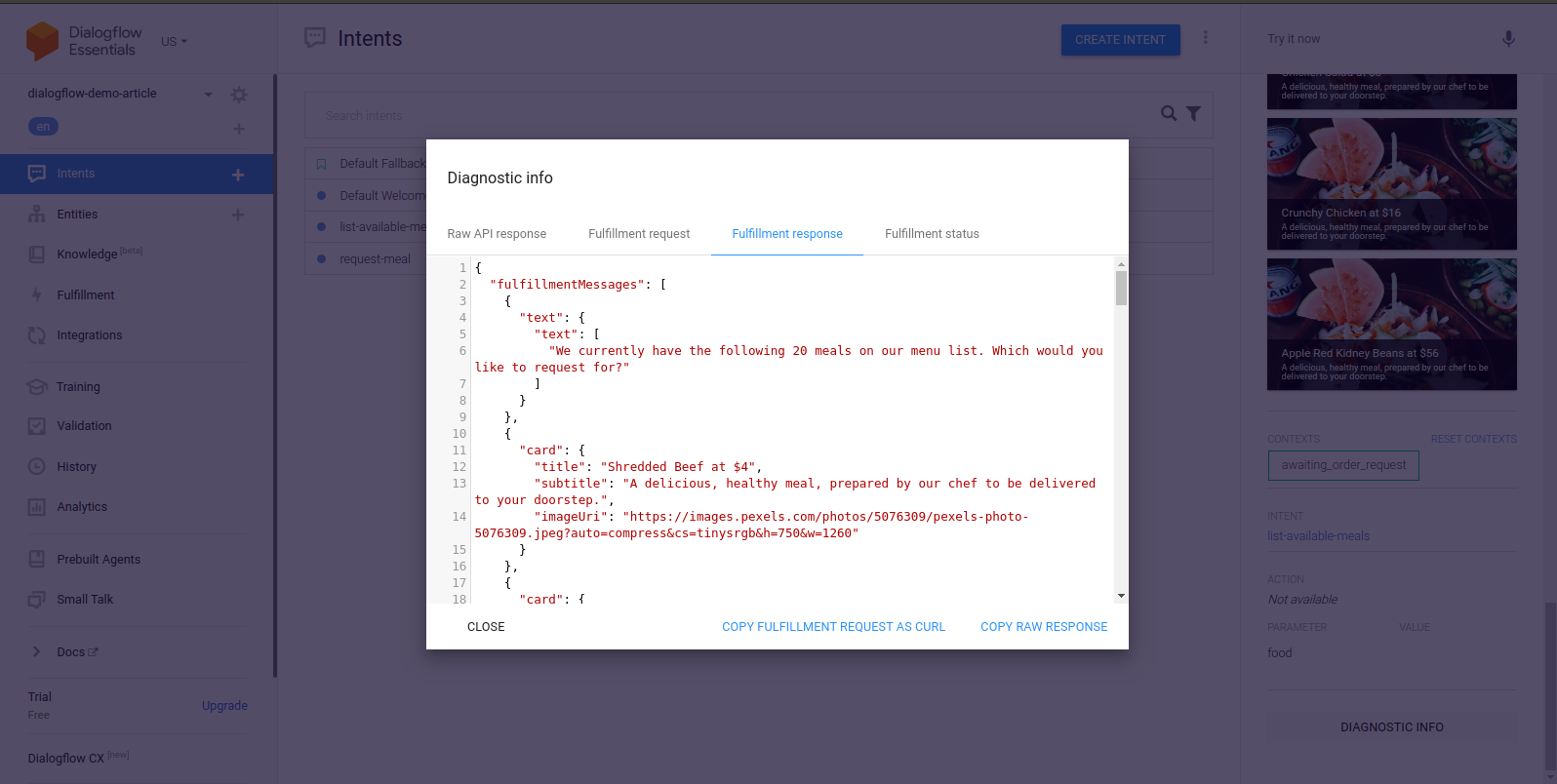

Starting from the center placed terminal in the image above, we can the series of POST requests made to the function running locally and on the right-hand side the data response from the function formatted into cards.

If for any reason a webhook request becomes unsuccessful, Dialogflow would resolve the error by using one of the listed responses. However, we can find out why the request failed by using the Diagnostic Info tool updated in each conversation. Within it are the Raw API response, Fulfillment request, Fulfillment response, and Fulfillment status tabs containing JSON formatted data. Selecting the Fulfillment response tab we can see the response from the webhook which is the cloud function running on our local machine.

At this point, we expect a user to continue the conversation with an order of one of the listed meals. We create the last intent for this demo next to handle meal orders.

Creating Request-meal Intent:

Following the same steps used while creating the first intent, we create a new intent using the console and name it request-meal and add an input context of awaiting_order_request to connect this intent from either the Default Welcome intent or the list-available meals intent.

Within the training phrase section, we make use of the following phrases,

Hi there, I'm famished, can I get some food?

Yo, I want to place an order for some food.

I need to get some food now.

Dude, I would like to purchase $40 worth of food.

Hey, can I get 2 plates of food?Reading through the phrases above, we can observe they all indicate one thing — the user wants food. In all of the phrases listed above, the name or type of food is not specified but rather they are all specified as food. This is because we want the food to be dynamic value, if we were to list all the food names we certainly would need to have a very large list of training phrases. This also applies to the amount and price of the food being ordered, they would be annotated and the agent would be able to recognize them as a placeholder for the actual values within an input.

To make a value within a phrase dynamic, dialogflow provides entities. Entities represent common types of data, and in this intent, we use entities to match several food types, various price amounts, and quantity from an end user’s sentence to request.

From the training phrases above, dialogflow would recognize $40 as `@sys.unit-currencywhich is under the amounts-with-units category of the [system entities list](https://cloud.google.com/dialogflow/es/docs/entities-system) and **2** as@numberunder the number category of the [system entities list](https://cloud.google.com/dialogflow/es/docs/entities-system). However,food` is not a not a recognized system entity. In a case such as this, dialogflow gives developers the option to create a custom entity to be used.

Managing Entities

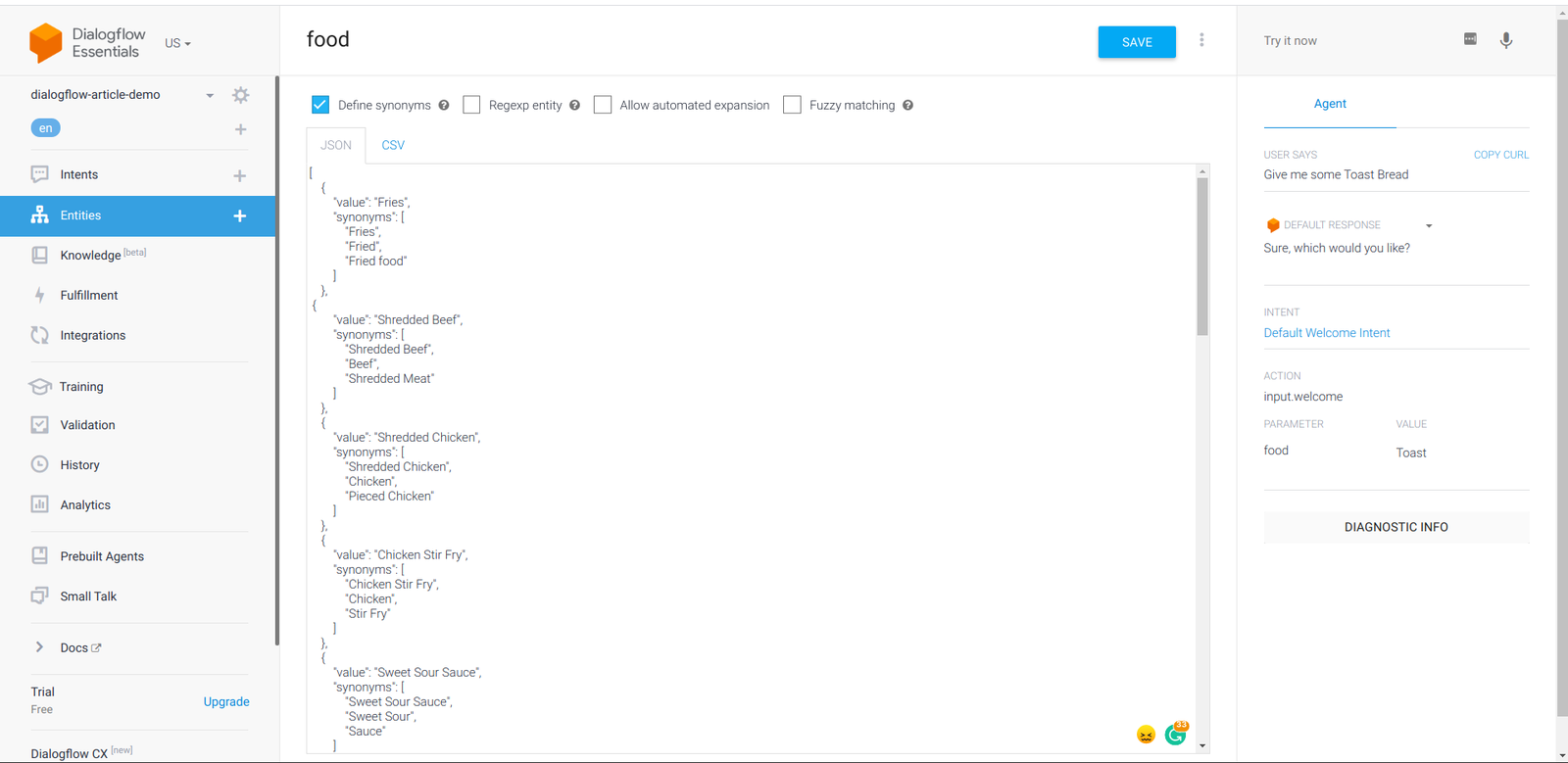

Double-clicking on food would pop up the entities dropdown menu, at the bottom of the items in the dropdown we would find the Create new entity button, and clicking it would navigate to the Entities tab on the dialogflow console, where we can manage all entities for the agent.

When at the entities tab, we name this new entity as food then at the options dropdown located at the top navigation bar beside the Save button we have the option to switch the entities input to a raw edit mode. Doing this would enable us to add several entity values in either a json or csv format rather than having to add the entities value one after the other.

After the edit mode has been changed, we would copy the sample JSON data below into the editor box.

// foods.json

[

{

"value": "Fries",

"synonyms": [

"Fries",

"Fried",

"Fried food"

]

},

{

"value": "Shredded Beef",

"synonyms": [

"Shredded Beef",

"Beef",

"Shredded Meat"

]

},

{

"value": "Shredded Chicken",

"synonyms": [

"Shredded Chicken",

"Chicken",

"Pieced Chicken"

]

},

{

"value": "Sweet Sour Sauce",

"synonyms": [

"Sweet Sour Sauce",

"Sweet Sour",

"Sauce"

]

},

{

"value": "Spring Onion",

"synonyms": [

"Spring Onion",

"Onion",

"Spring"

]

},

{

"value": "Toast",

"synonyms": [

"Toast",

"Toast Bread",

"Toast Meal"

]

},

{

"value": "Sandwich",

"synonyms": [

"Sandwich",

"Sandwich Bread",

"Sandwich Meal"

]

},

{

"value": "Eggs Sausage Wrap",

"synonyms": [

"Eggs Sausage Wrap",

"Eggs Sausage",

"Sausage Wrap",

"Eggs"

]

},

{

"value": "Pancakes",

"synonyms": [

"Pancakes",

"Eggs Pancakes",

"Sausage Pancakes"

]

},

{

"value": "Cashew Nuts",

"synonyms": [

"Cashew Nuts",

"Nuts",

"Sausage Cashew"

]

},

{

"value": "Sweet Veggies",

"synonyms": [

"Sweet Veggies",

"Veggies",

"Sweet Vegetables"

]

},

{